Kubernetes Posture Made Simple With Polaris

An interesting choice for safer clusters

Working as a solutions architect while going deep on Kubernetes security — prevention-first thinking, open source tooling, and a daily rabbit hole of hands-on learning. I make the mistakes, then figure out how to fix them (eventually).

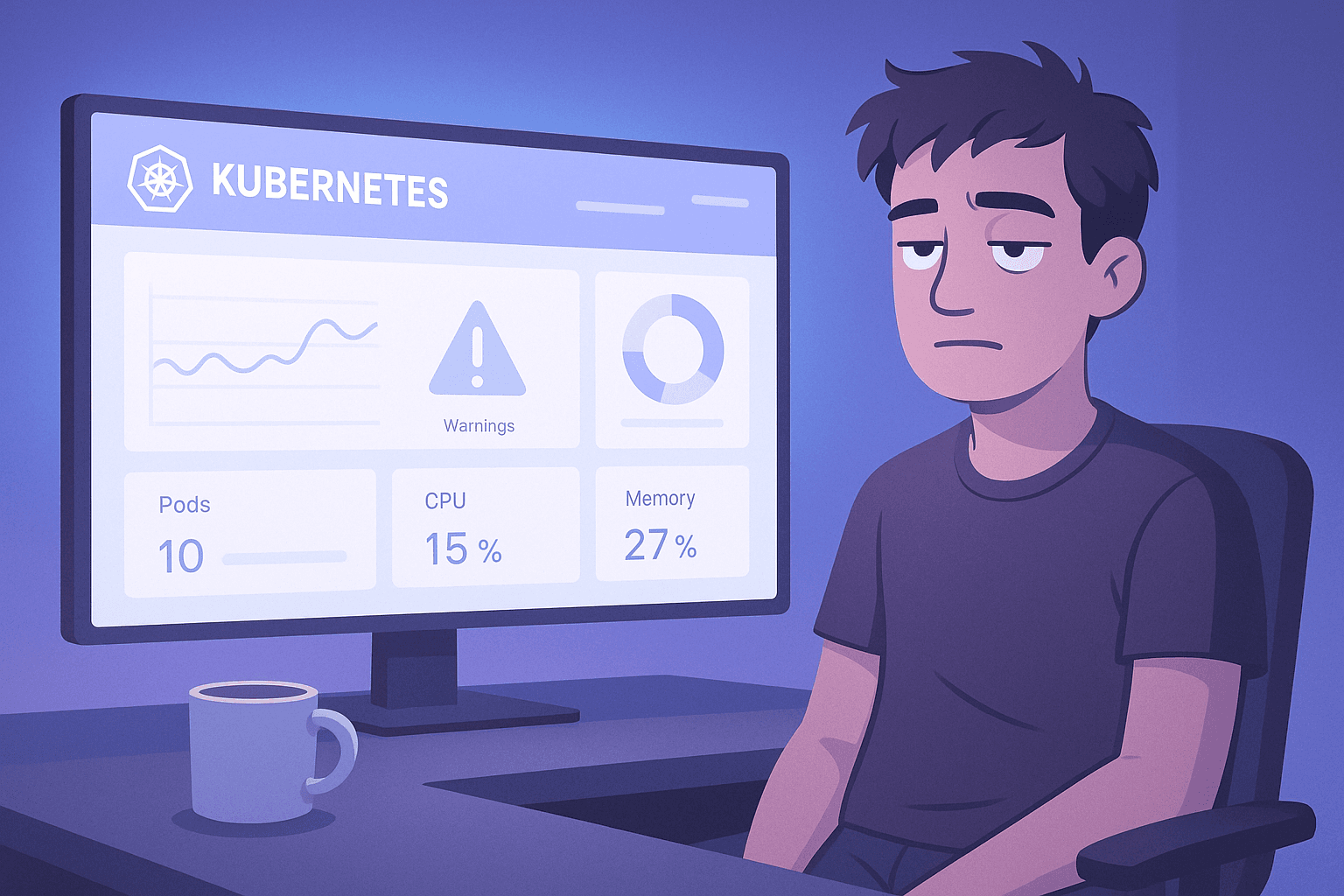

Kubernetes has slim pickings when it comes to open source “posture tools.” We’ve already looked at kube-bench, which is not terrible. So, still wandering the landscape in search of the Holy Grail, we’re now turning to Fairwinds Polaris.

Polaris tries to cover three angles:

- A dashboard that shows you workload posture issues (nothing groundbreaking, but workable)

- An admission controller that can… well, do admission controller things

- A CLI/CI scanner that flags obvious problems before you unleash them on a cluster

The dashboard is… fine. Polished enough, just not particularly inspired. The checks behind it are solid, and the fact that you get dashboard + AC + pipeline scanning in one lightweight package is, objectively, something. It’s worth noting that Polaris mixes in a fair number of non-security checks as well, which we’ll take a look at.

But here’s the honest tl;dr: Polaris is useful, but it’s not exactly the kind of tool you rearrange your security stack for. The juice isn't quite worth the squeeze. Still, it's worth a look, if only to confirm that feeling.

Installing Polaris

Polaris ships as a Helm chart, and the install process is easy. If all you care about is seeing the dashboard and getting a quick read on your workload posture, this is the simplest path.

1. Add the Fairwinds repo

helm repo add fairwinds-stable https://charts.fairwinds.com/stable

helm repo update

2. Construct a values file

This is one of the nicer parts of Polaris: with just a few settings you can get a clean NodePort service for the dashboard and a safely scoped admission controller. The webhook runs in Fail mode and only for namespaces labeled with ac-land, which we’ll set up later for testing.

Save the following as values.yaml:

dashboard:
  service:
    type: NodePort
webhook:
  enable: true
  validate: true
  mutate: false
  failurePolicy: Fail
  namespaceSelector:
    matchExpressions:
      - key: ac-land
        operator: Exists

3. Install Polaris (dashboard and admission control enabled)

helm upgrade --install polaris fairwinds-stable/polaris --namespace polaris --create-namespace -f values.yaml

This gives you the Deployment, Service, RBAC, and all the usual Helm chart trimmings.
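A quick way to confirm the release landed is to ask Helm and the API server directly. This is a minimal sketch: the function name check_polaris_install is hypothetical, and the release name and namespace are the ones used in the install command above.

```shell
# Hypothetical helper: confirm the Helm release and its workloads exist.
# Assumes release "polaris" in namespace "polaris", as in the install command.
check_polaris_install() {
  helm status polaris --namespace polaris &&
    kubectl get deployments,pods,services --namespace polaris
}

# Usage (requires cluster access):
#   check_polaris_install
```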

4. Accessing the Dashboard

Grab the port from the NodePort service we now have.

matt@cp:~/fairwinds/polaris$ kubectl get svc -n polaris
NAME                TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)        AGE
polaris-dashboard   NodePort    10.97.42.83     <none>        80:32423/TCP   5m46s
polaris-webhook     ClusterIP   10.104.102.78   <none>        443/TCP        41m

Now you can hit:

http://<node-ip>:32423 #or whatever your NodePort is

Success, your dashboard is now running.
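If you'd rather not read the port out of the table, a jsonpath query can pull it directly. A minimal sketch, assuming the service name and namespace shown in the output above; the function name dashboard_nodeport is hypothetical.

```shell
# Hypothetical helper: print the NodePort assigned to the Polaris dashboard.
# Assumes the service is named polaris-dashboard in the polaris namespace.
dashboard_nodeport() {
  kubectl get svc polaris-dashboard --namespace polaris \
    -o jsonpath='{.spec.ports[0].nodePort}'
}

# Usage (requires cluster access):
#   echo "http://<node-ip>:$(dashboard_nodeport)"
```

If NodePorts aren't reachable from your machine, kubectl port-forward -n polaris svc/polaris-dashboard 8080:80 should work as well, given that the service listens on port 80.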

Dashboard Walkthrough

Let’s start with the annoying part: when you open the Polaris dashboard, the very first thing you see is a giant header linking out to all things Fairwinds/Polaris. And yes — there’s what looks like an embedded ad. Which, for extra spice, leads to a 404. Not a great first impression, but I digress.

Here’s what the dashboard actually gives you:

Overview

This section shows:

- IP address

- Overall grade

- A donut chart breaking down Passing, Warning, and Dangerous checks

None of this is clickable.

The grade is the most interesting (and the most confusing) part — but we’ll get into that later.

Insights

This appears to be the same information as the Overview, just in a slightly different layout.

There’s no new detail, no navigation, no drill-down.

Not sure what purpose this panel serves beyond filling space.

Categories

This breaks down your results into Polaris’s three buckets:

- Efficiency

- Reliability

- Security

It’s a nice view, but still not interactive.

The only clickable element is a link that takes you to the generic findings definitions in the Polaris docs — not to the specific finding, not to your workload, just the docs homepage for categories.

Namespaces / Cluster Resources

This is the part that actually works:

- You get a list of cluster-wide resources

- Below that, a list of resources grouped by namespace

- Clicking either lets you expand and see which checks passed or failed

You can also filter by namespace, which updates the Overview to show posture for only that slice of the cluster — probably the most genuinely useful interaction in the entire dashboard.

For each individual check, you’ll find a tiny “info” icon, but it just links you back to the generic Polaris docs again. No contextual explanation, no specific guidance.

TL;DR

The dashboard is functional, but limited. It's useful in small doses, but not something you’ll rely on day‑to‑day.

Understanding the Grade

The grade is the most interesting and confusing part of the Polaris dashboard, and it all comes down to how checks are counted.

This is where I'll pretend I'm a data scientist. But hey, I did take statistics once upon a time.

You get three numbers:

- Passing — checks you passed

- Warning — checks that aren’t ideal, but not catastrophic (I am guessing)

- Dangerous — checks that are actually bad

You’ll also see a little note under the score explaining that warnings get half the weight of dangerous checks. Sounds simple enough:

score = Passing / (Passing + Dangerous + 0.5 * Warning)

Why this is confusing

Warnings are being scaled down (only counting as half a “bad” check)… but Passing is not being scaled in any way to match that weighting.

In other words:

- Dangerous checks hurt you at full weight

- Warnings hurt you at half weight

- Passing checks always count as full credit, even though warnings are being down-weighted in the denominator.

So you’re no longer looking at “percentage of checks passed,” or anything intuitive like that. Instead, you’re looking at a weighted penalty score, where warnings only count as half a failure, but never count as half a success.

Why I don’t get it…

If warnings are meant to be “half bad,” logically they should also be “half good.” Not doing this creates a mismatch:

- The total checks you see (e.g., 826) ≠ the denominator used for grading (which counts each warning as 0.5)

The end result is a grade that sort of looks like a percentage but isn't one.

A Quick Example

Let’s use simple round numbers so we can see the problem clearly.

Imagine Polaris reports:

- Passing: 700

- Warning: 76

- Dangerous: 50

- Total checks: 826

At first glance, you might think the grade is something like:

“700 out of 826 checks passed.”

But that’s not what Polaris calculates.

What Polaris Actually Calculates

Using their formula:

score = 700 / (700 + 50 + 0.5 * 76)

Compute the denominator:

- Passing = 700

- Dangerous = 50

- Half the Warnings = 38

denominator = 700 + 50 + 38 = 788

So the Polaris score becomes:

score = 700 / 788 ≈ 0.89

This isn’t “89% of checks passed.” It’s passing checks divided by passing checks plus a weighted count of the bad ones. That’s why, in my opinion, the number feels disconnected from what you see.
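To make the gap concrete, here is a small sketch that computes both numbers side by side using the formula quoted earlier. The function name polaris_score is hypothetical, and the "naive" figure is simply my point of comparison, not something Polaris reports.

```shell
# Hypothetical helper: compare the weighted score with a plain pass rate.
# Arguments: passing, warning, dangerous counts.
polaris_score() {
  awk -v p="$1" -v w="$2" -v d="$3" 'BEGIN {
    weighted = p / (p + d + 0.5 * w)   # formula described by the dashboard note
    naive    = p / (p + w + d)         # plain share of checks passed
    printf "weighted=%.2f naive=%.2f\n", weighted, naive
  }'
}

polaris_score 700 76 50
# prints: weighted=0.89 naive=0.85
```

Same inputs, two different answers: 700 of 826 is about 85%, while the weighted formula lands at about 89%.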

Ok enough of the data science.

Testing the Admission Controller

Seriously, another admission controller?

With Polaris installed from our scoped values file, it’s time to actually test the admission controller and see what it catches. The webhook is running with failurePolicy: Fail but only applies to namespaces carrying the ac-land label, which gives us a safe sandbox to experiment in without risking the rest of the cluster.

Create the test namespace

kubectl create namespace ac-land

kubectl label namespace ac-land ac-land=true

Everything we apply here should be intercepted by the Polaris webhook.
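Because the selector in values.yaml uses operator: Exists, only the label key matters; the value true is arbitrary. A sketch for listing which namespaces the webhook will apply to (the function name webhook_namespaces is hypothetical):

```shell
# Hypothetical helper: list namespaces that carry the ac-land label key,
# mirroring the webhook's namespaceSelector (key: ac-land, operator: Exists).
webhook_namespaces() {
  kubectl get namespaces --selector ac-land --show-labels
}

# Usage (requires cluster access):
#   webhook_namespaces
```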

Deploy a “known bad” workload

Let’s start with something obviously wrong. This one has no resource limits, runs as root, and is missing probes: the usual Kubernetes crimes.

apiVersion: v1
kind: Pod
metadata:
  name: bad-pod
  namespace: ac-land
spec:
  containers:
    - name: app
      image: nginx:latest
      securityContext:
        runAsUser: 0
      resources:
        requests: {}
        limits: {}

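The pod will not be created. That isn't because of failurePolicy: Fail, which only controls what happens when the webhook itself can't be reached or errors out. It's because the webhook is validating (validate: true) and the pod fails several "Dangerous" checks, such as "Image tag should be specified." In action we see the following: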

matt@cp:~/fairwinds/polaris$ kubectl apply -f bad-pod.yaml

Error from server (Forbidden): error when creating "bad-pod.yaml": admission webhook "polaris.fairwinds.com" denied the request:

Polaris prevented this deployment due to configuration problems:

- Container app: Image tag should be specified

- Container app: Should not be allowed to run as root

- Container app: Privilege escalation should not be allowed
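For contrast, here is a sketch of a pod that addresses the three denials above: a pinned tag, a non-root user, and no privilege escalation. The image nginxinc/nginx-unprivileged and its 1.27 tag are my assumptions (stock nginx expects to start as root), and I have not run this exact manifest through the webhook, so treat it as a starting point rather than a guaranteed pass.

```shell
# Write a hypothetical "good" counterpart to bad-pod.yaml.
cat > good-pod.yaml <<'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: good-pod
  namespace: ac-land
spec:
  containers:
    - name: app
      # Assumption: an nginx build that runs as a non-root user, with a pinned tag.
      image: nginxinc/nginx-unprivileged:1.27
      securityContext:
        runAsNonRoot: true               # addresses "Should not be allowed to run as root"
        allowPrivilegeEscalation: false  # addresses "Privilege escalation should not be allowed"
EOF

# Then apply it to the labeled namespace (requires cluster access):
#   kubectl apply -f good-pod.yaml
```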

Check what Polaris actually saw

Look at the webhook logs:

matt@cp:~/fairwinds/polaris$ kubectl logs -n polaris -l component=webhook

> /go/pkg/mod/sigs.k8s.io/controller-runtime@v0.21.0/pkg/certwatcher/certwatcher.go:139 +0x2e8

> sigs.k8s.io/controller-runtime/pkg/webhook.(*DefaultServer).Start.func1()

> /go/pkg/mod/sigs.k8s.io/controller-runtime@v0.21.0/pkg/webhook/server.go:214 +0x28

> created by sigs.k8s.io/controller-runtime/pkg/webhook.(*DefaultServer).Start in goroutine 66

> /go/pkg/mod/sigs.k8s.io/controller-runtime@v0.21.0/pkg/webhook/server.go:213 +0x28c

time="2025-11-26T19:47:32Z" level=info msg="Starting admission request"

time="2025-11-26T19:47:32Z" level=info msg="Object bad-pod has no owner - running checks"

time="2025-11-26T19:47:32Z" level=warning msg="no ResourceProvider available, check automountServiceAccountToken will not work in this context (e.g. admission control)"

time="2025-11-26T19:47:32Z" level=warning msg="no ResourceProvider available, check missingNetworkPolicy will not work in this context (e.g. admission control)"

time="2025-11-26T19:47:32Z" level=info msg="3 validation errors found when validating bad-pod"

> /go/pkg/mod/sigs.k8s.io/controller-runtime@v0.21.0/pkg/certwatcher/certwatcher.go:139 +0x2e8

> sigs.k8s.io/controller-runtime/pkg/webhook.(*DefaultServer).Start.func1()

> /go/pkg/mod/sigs.k8s.io/controller-runtime@v0.21.0/pkg/webhook/server.go:214 +0x28

> created by sigs.k8s.io/controller-runtime/pkg/webhook.(*DefaultServer).Start in goroutine 43

> /go/pkg/mod/sigs.k8s.io/controller-runtime@v0.21.0/pkg/webhook/server.go:213 +0x28c

time="2025-11-26T19:15:16Z" level=info msg="Starting admission request"

time="2025-11-26T19:15:16Z" level=info msg="Object bad-pod has no owner - running checks"

time="2025-11-26T19:15:16Z" level=warning msg="no ResourceProvider available, check automountServiceAccountToken will not work in this context (e.g. admission control)"

time="2025-11-26T19:15:16Z" level=warning msg="no ResourceProvider available, check missingNetworkPolicy will not work in this context (e.g. admission control)"

time="2025-11-26T19:15:16Z" level=info msg="3 validation errors found when validating bad-pod"

Polaris gives you some useful information, but it’s presented in a pretty strange way. You’ll see a mix of errors, partial details about which validations failed, and a few items that look like failures but are really just warnings. The webhook logs themselves aren’t exactly pleasant. They’re noisy, inconsistent, and don’t meaningfully explain what Polaris actually decided.

And the dashboard? As far as I can tell, none of this admission activity shows up there at all.

Non-Security Checks: Efficiency & Reliability

Polaris isn’t just about security; it also ships with a wide set of efficiency and reliability checks. These aren’t going to stop an attacker, but they do help catch the everyday “why is this Deployment so fragile?” issues.

These include things like:

- Missing CPU or memory requests

- Missing CPU or memory limits

- Liveness/readiness probes not defined

- Using the latest tag

- Pods without disruption budgets

These show up in the dashboard under the Efficiency and Reliability categories. The grouping is a bit high-level, but clicking into a Deployment or Pod gives you the full list of checks, their severity, and Polaris' recommendation.

While these aren’t “security” checks per se, they’re useful guardrails for teams that want basic hygiene without pulling in a more complex policy engine. Just don’t expect deep insight.

Customizing Polaris Checks

Polaris ships with a large default ruleset, but not all of it will make sense for you! Fortunately, you can tune or disable checks using a simple configuration file.

Example config.yaml

checks:
  cpuRequestsMissing: warning
  cpuLimitsMissing: ignore
  readinessProbeMissing: danger
  livenessProbeMissing: warning
  tagNotSpecified: ignore

Applying It

helm upgrade --install polaris fairwinds-stable/polaris -n polaris -f values.yaml --set-file config=config.yaml

Customizing checks helps reduce noise and lets Polaris fit your environment instead of the other way around. Not such a bad thing, I guess.

Polaris in CLI & CI

Polaris can be used as a CLI or CI scanner. This is the mode where its checks are surfaced cleanly and without the noise of dashboards or webhooks.

CLI Scan Example

First, install it locally via Homebrew.

brew tap FairwindsOps/tap

brew install FairwindsOps/tap/polaris

Then run against the bad-pod.yaml file from earlier.

matt.brown@matt Polaris % polaris audit --audit-path . --format=pretty

Polaris audited Path . at 2025-11-26T12:15:52-08:00

Nodes: 0 | Namespaces: 0 | Controllers: 1

Final score: 48

Pod bad-pod in namespace ac-land

metadataAndInstanceMismatched 😬 Warning

Reliability - Label app.kubernetes.io/instance must match metadata.name

hostNetworkSet 🎉 Success

Security - Host network is not configured

hostPIDSet 🎉 Success

Security - Host PID is not configured

hostPathSet 🎉 Success

Security - HostPath volumes are not configured

hostProcess 🎉 Success

Security - Privileged access to the host check is valid

missingNetworkPolicy 😬 Warning

Security - A NetworkPolicy should match pod labels and contain applied egress and ingress rules

priorityClassNotSet 😬 Warning

Reliability - Priority class should be set

procMount 🎉 Success

Security - The default /proc masks are set up to reduce attack surface, and should be required

topologySpreadConstraint 😬 Warning

Reliability - Pod should be configured with a valid topology spread constraint

automountServiceAccountToken 😬 Warning

Security - The ServiceAccount will be automounted

hostIPCSet 🎉 Success

Security - Host IPC is not configured

Container app

sensitiveContainerEnvVar 🎉 Success

Security - The container does not set potentially sensitive environment variables

tagNotSpecified ❌ Danger

Reliability - Image tag should be specified

hostPortSet 🎉 Success

Security - Host port is not configured

linuxHardening 😬 Warning

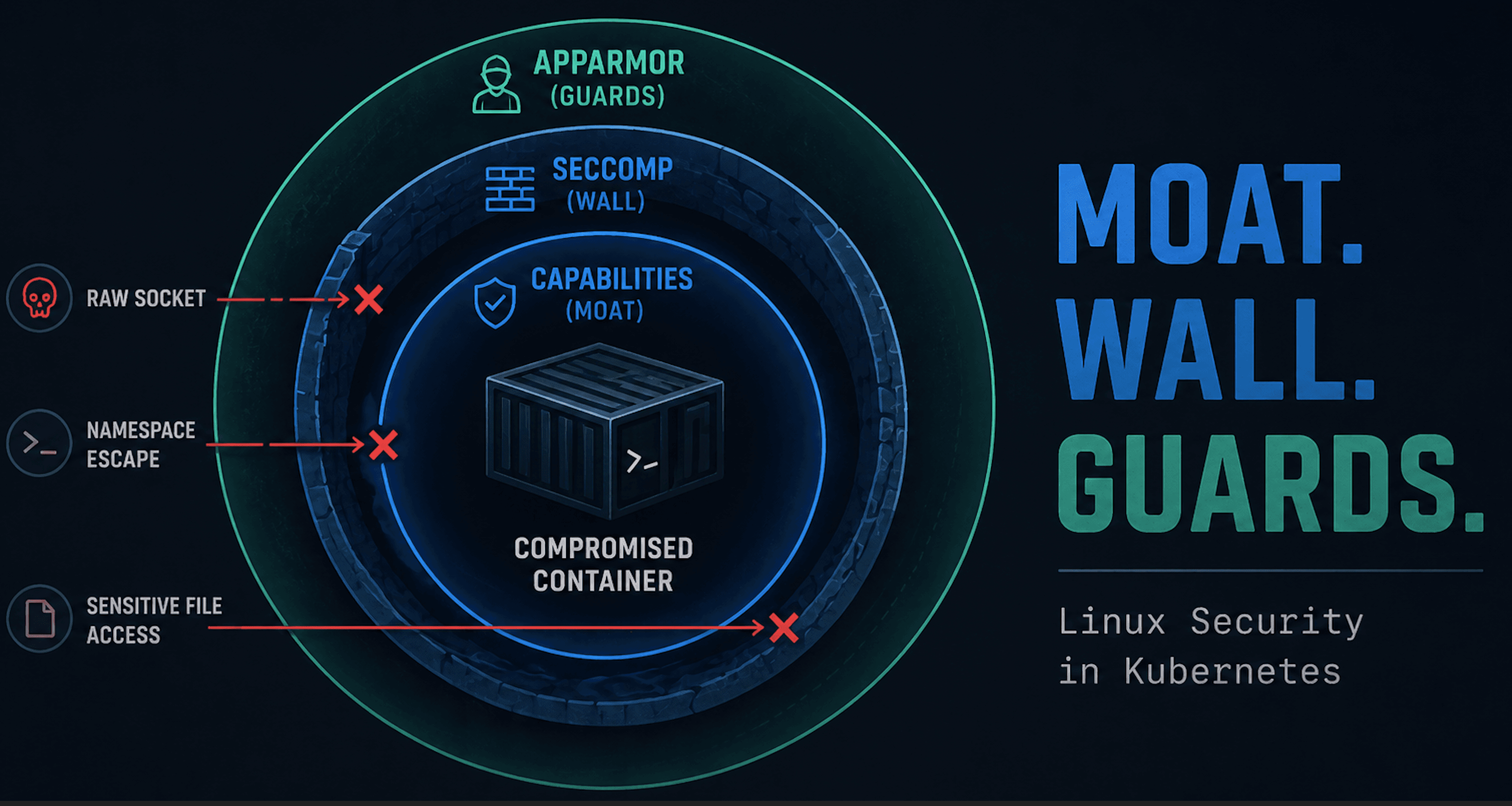

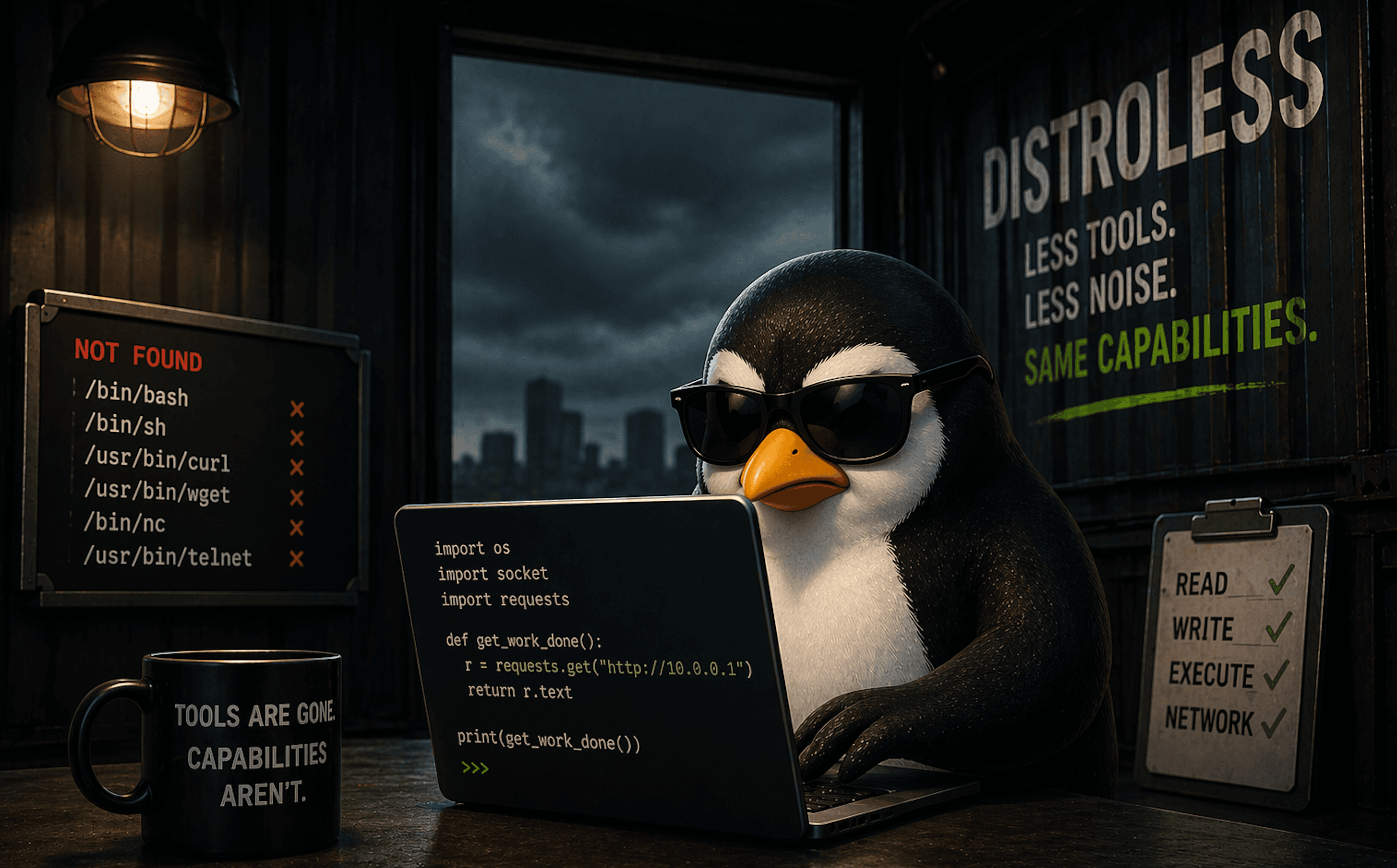

Security - Use one of AppArmor, Seccomp, SELinux, or dropping Linux Capabilities to restrict containers using unwanted privileges

pullPolicyNotAlways 😬 Warning

Reliability - Image pull policy should be "Always"

insecureCapabilities 😬 Warning

Security - Container should not have insecure capabilities

memoryRequestsMissing 😬 Warning

Efficiency - Memory requests should be set

privilegeEscalationAllowed ❌ Danger

Security - Privilege escalation should not be allowed

cpuLimitsMissing 😬 Warning

Efficiency - CPU limits should be set

dangerousCapabilities 🎉 Success

Security - Container does not have any dangerous capabilities

livenessProbeMissing 😬 Warning

Reliability - Liveness probe should be configured

memoryLimitsMissing 😬 Warning

Efficiency - Memory limits should be set

notReadOnlyRootFilesystem 😬 Warning

Security - Filesystem should be read only

readinessProbeMissing 😬 Warning

Reliability - Readiness probe should be configured

runAsPrivileged 🎉 Success

Security - Not running as privileged

runAsRootAllowed ❌ Danger

Security - Should not be allowed to run as root

cpuRequestsMissing 😬 Warning

Efficiency - CPU requests should be set
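The counts in this output can be cross-checked against the grade formula, score = Passing / (Passing + Dangerous + 0.5 * Warning). The report lists 10 successes, 15 warnings, and 3 dangers, so 10 / (10 + 3 + 7.5) ≈ 0.4878, which lines up with "Final score: 48" if the result is truncated rather than rounded. The truncation is my assumption, inferred from this one run. A sketch that does the tally from a saved copy of the pretty output (the function name tally_score is hypothetical):

```shell
# Hypothetical helper: tally results from saved `polaris audit --format=pretty`
# output and recompute the score. Truncating to an integer is an assumption.
tally_score() {
  awk '
    /Success[[:space:]]*$/ { pass++ }
    /Warning[[:space:]]*$/ { warn++ }
    /Danger[[:space:]]*$/  { danger++ }
    END {
      total = pass + danger + 0.5 * warn
      score = total ? int(100 * pass / total) : 0
      printf "pass=%d warn=%d danger=%d score=%d\n", pass, warn, danger, score
    }' "$1"
}

# Usage:
#   polaris audit --audit-path . --format=pretty > report.txt
#   tally_score report.txt
```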

Polaris acts like a lightweight linter for Kubernetes YAML. It's fast, easy to plug in, and gives clear feedback on both security and reliability issues before anything ever hits version control or your cluster. Nice touch with the emojis, at least.
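For the CI half, the audit command has flags that turn findings into a non-zero exit code. A minimal sketch of a pipeline gate, where the function name polaris_gate, the default path, and the score threshold of 80 are all my own placeholders:

```shell
# Hypothetical CI gate: fail the job on any danger-level finding
# or when the overall score drops below a threshold.
# Arguments: path to manifests (default "."), minimum score (default 80).
polaris_gate() {
  polaris audit \
    --audit-path "${1:-.}" \
    --format=pretty \
    --set-exit-code-on-danger \
    --set-exit-code-below-score "${2:-80}"
}

# Usage in a pipeline step:
#   polaris_gate ./deploy 90
```

With the bad-pod.yaml from earlier in the audit path, this should exit non-zero on both counts: three danger-level findings and a score of 48.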

Bonus: A Quick Look at Pluto (Outdated API Checker)

Pluto is a companion Fairwinds tool that identifies deprecated or soon‑to‑be‑removed Kubernetes API versions. It’s perfect for catching upcoming breakage before your next cluster upgrade.

Install Pluto on Linux (ARM64)

# 1. Download the ARM64 binary

wget https://github.com/FairwindsOps/pluto/releases/download/v5.22.6/pluto_5.22.6_linux_arm64.tar.gz

# 2. Extract the archive

tar -xvf pluto_5.22.6_linux_arm64.tar.gz

# 3. Make it executable

chmod +x pluto

# 4. Verify

./pluto version

You should see something like:

Version:5.22.6 Commit:27a470e10b07302fba2d5a2e6817a08a2b87c0c3

Test Pluto Using a Deprecated API (FlowSchema v1beta3)

Save this as fc.yaml:

apiVersion: flowcontrol.apiserver.k8s.io/v1beta3
kind: FlowSchema
metadata:
  name: deprecated-flowschema
spec:
  priorityLevelConfiguration:
    name: workload-high
  matchingPrecedence: 1000
  distinguisherMethod:
    type: ByUser
  rules:
    - subjects:
        - kind: User
          user:
            name: system:serviceaccount:default:default
      resourceRules:
        - verbs: ["*"]
          apiGroups: ["*"]
          resources: ["*"]
      nonResourceRules:
        - verbs: ["*"]
          nonResourceURLs: ["*"]

Run Pluto against the file and you’ll see a deprecation warning.

matt@cp:~/fairwinds/polaris$ ./pluto detect-files -f fc.yaml
NAME                    KIND         VERSION                                REPLACEMENT                       REMOVED   DEPRECATED   REPL AVAIL
deprecated-flowschema   FlowSchema   flowcontrol.apiserver.k8s.io/v1beta3   flowcontrol.apiserver.k8s.io/v1   false     true         false
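Pluto can also scan a whole directory and evaluate it against the Kubernetes version you plan to upgrade to. A minimal sketch, where the function name pluto_scan, the PLUTO_BIN variable, and the default target of v1.33.0 are my own placeholders:

```shell
# Hypothetical helper: scan a directory of manifests against a target version.
# PLUTO_BIN defaults to the binary extracted above; override it if pluto is on PATH.
# Arguments: directory (default "."), target Kubernetes version (default v1.33.0).
pluto_scan() {
  "${PLUTO_BIN:-./pluto}" detect-files \
    --directory "${1:-.}" \
    --target-versions "k8s=${2:-v1.33.0}"
}

# Usage:
#   pluto_scan ./manifests v1.33.0
```

As I understand it, Pluto exits non-zero when it finds deprecated or removed API versions, which makes a call like this usable as a pre-upgrade pipeline check. Verify the exact exit codes against the Pluto docs for your version.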

Pluto in your running cluster

Pluto can also be run against your live cluster. While this is useful for older cluster versions, against a 1.33 cluster you shouldn't see anything.

matt@cp:~/fairwinds/polaris$ kubectl version

Client Version: v1.33.6

Kustomize Version: v5.6.0

Server Version: v1.33.6

matt@cp:~/fairwinds/polaris$ ./pluto detect-all-in-cluster -o wide 2>/dev/null

There were no resources found with known deprecated apiVersions.

Wrap Up

If you’ve made it this far, I commend you. Writing this post felt a bit like sitting five minutes into a panel interview and realizing this isn’t the right candidate, but you still push through out of courtesy.

Polaris is a lightweight posture tool that offers a very surface-level read on workload quality. The dashboard looks fine but doesn’t tell you much, the admission controller functions but provides almost no visibility, and the CLI has pockets of usefulness if you really need a YAML linter with opinions. Furthermore, there isn't really a clear standard it is being evaluated against. Is this compliance, best practice, or something else?

But the reality is simple: there isn’t much here that provides meaningful or lasting value. It’s not deep, it’s not insightful, and it’s not something I’d recommend beyond casual curiosity.