Signed, Sealed, and Admitted

Image Trust with Cosign and Kyverno

Working as a solutions architect while going deep on Kubernetes security — prevention-first thinking, open source tooling, and a daily rabbit hole of hands-on learning. I make the mistakes, then figure out how to fix them (eventually).

Kubernetes does a lot of things automatically — scheduling, networking, scaling. But trust isn’t one of them. If someone pushes an image to a registry with your project’s name on it, Kubernetes won’t ask questions. It’ll just pull and run.

Of course, that’s not exactly ideal. A single problematic image can skip right past scanning gates and land in production because the cluster never checked where it came from.

Fortunately, there’s an easy way to fix that: image signing.

Image signing proves who built an image and that it hasn’t changed since. Cosign (part of the Sigstore project) handles the signing and verification piece, giving your container images a verifiable identity. Kyverno, meanwhile, enforces that trust boundary inside the cluster — it can block any workload whose image isn’t signed by a trusted key.

In this post, we’ll:

- Use Cosign to sign and verify a container image manually.

- Create a Kyverno policy that rejects unsigned workloads.

- Try the Sigstore Policy Controller as a simpler alternative for admission checks.

No lengthy PKI setup — just practical, auditable trust you can drop into any Kubernetes cluster today.

Why Image Signing Matters

Let's run through a very simple example of why you might care about this whole signing thing.

Say you’ve got a clean build pipeline. Your team pushes to ghcr.io/company/backend:latest and Kubernetes pulls it straight into production. Everyone trusts that tag.

Then one day you spin up a fork, test a quick change, and push it back to the same tag. Oops. The registry accepts it. The cluster redeploys automatically. Now the cluster is running this somewhat mysterious thing.

Nothing malicious happened. There was no exploit, no compromised credential—just an overly trusted tag and a missing signature.

That’s what image signing solves. Instead of trusting that “latest” means yours, you trust that it’s signed by someone you actually know. Cosign adds that proof. Kyverno enforces it before anything runs.

Before we dive in, you can see this in action with a public example. Chainguard maintains a registry called cgr.dev, which hosts signed, minimal container images. Every image there—like cgr.dev/chainguard/nginx—is verifiable using Cosign and Sigstore’s transparency log.

Running a basic check with OIDC (don't worry, we'll get to setting this all up soon):

matt.brown@matt ~ % cosign verify \

--certificate-identity-regexp "https://github.com/chainguard-images/images/.*" \

--certificate-oidc-issuer "https://token.actions.githubusercontent.com" \

cgr.dev/chainguard/nginx

Verification for cgr.dev/chainguard/nginx:latest --

The following checks were performed on each of these signatures:

- The cosign claims were validated

- Existence of the claims in the transparency log was verified offline

- The code-signing certificate was verified using trusted certificate authority certificates

You’ll see Cosign validate the signature and confirm it was signed through GitHub’s OIDC workflow. That’s what we’re building toward: verifiable image trust that proves where your workloads come from.

Setup Cosign

Before we sign anything, we need to make sure Cosign is installed and working locally.

Install Cosign on macOS

The easiest way to get Cosign on macOS is with Homebrew. The catch? Homebrew currently ships Cosign 3.x, which switched from creating separate .sig files to storing signatures as OCI bundles.

That change is great for the future, but today it breaks verification with Kyverno (and a few other tools that still expect legacy .sig tags).

Installing the newest Cosign is worse than what Alice had to experience.

If we search Homebrew, we see only one formula:

brew search cosign

==> Formulae

cosign

So, to stay compatible with Kyverno, we’ll install Cosign 2.6.1 manually using Go.

First, install Go and make sure your $PATH includes $HOME/go/bin (skip if you already have Go):

brew install go

echo 'export PATH="$PATH:$HOME/go/bin"' >> ~/.zshrc

source ~/.zshrc

Then install Cosign 2.6.1:

go install github.com/sigstore/cosign/v2/cmd/cosign@v2.6.1

Once installed, confirm your version:

cosign version

Expected output:

______ ______ _______. __ _______ .__ __.

/ | / __ \ / || | / _____|| \ | |

| ,----'| | | | | (----`| | | | __ | \| |

| | | | | | \ \ | | | | |_ | | . ` |

| `----.| `--' | .----) | | | | |__| | | |\ |

\______| \______/ |_______/ |__| \______| |__| \__|

cosign: A tool for Container Signing, Verification and Storage in an OCI registry.

GitVersion: v2.6.1

GitCommit: unknown

GitTreeState: unknown

BuildDate: unknown

GoVersion: go1.25.3

Compiler: gc

Platform: darwin/arm64

Generating Signing Keys

Now onto generating our keys.

Cosign Generated

The easiest way to get started is to use Cosign's built in key generation capability. Generate keys using the generate-key-pair command. It will require a password for your private key.

matt.brown@matt ~ % cosign generate-key-pair

Enter password for private key:

Enter password for private key again:

Private key written to cosign.key

Public key written to cosign.pub

That gives you:

matt.brown@matt ~ % ls | grep cosign

cosign.key

cosign.pub

You can view the public key by simply opening it. Who would have thought? But we will need this later.

matt.brown@matt ~ % cat cosign.pub

-----BEGIN PUBLIC KEY-----

...

-----END PUBLIC KEY-----

You can also regenerate this at any time if you ever lose the .pub file:

cosign public-key --key cosign.key

Non Cosign Generated

You can also use keys generated outside of Cosign. Importing them converts them to Cosign's format.

Here's a quick command to generate a new elliptic-curve private key using the P-256 curve and save it as private.pem.

openssl ecparam -name prime256v1 -genkey -noout -out private.pem

Then use the Cosign import capability.

matt.brown@matt ~ % cosign import-key-pair --key private.pem

Enter password for private key:

Enter password for private key again:

Private key written to import-cosign.key

Public key written to import-cosign.pub

The end result is the same: we can now sign images.

Shipping Signed Images

So now we have our keys set. This means we're ready to hit the next step, which is to actually sign the image. You knew we'd get here at some point. We complete the process by pushing it to a repository.

GitHub Container Registry is my preferred option, but Docker Hub or another registry works just as well if you prefer.

Let's build our image. You can use any image of course, but feel free to use mine (https://github.com/sf-matt/hello-flask-signed). This is just a simple Flask app.

matt.brown@matt hello-flask-signed % docker buildx build --platform linux/amd64,linux/arm64 -t ghcr.io/sf-matt/hello-flask-signed:v1 --push .

[+] Building 5.2s (12/12) FINISHED docker:desktop-linux

=> [internal] load build definition from Dockerfile 0.0s

=> => transferring dockerfile: 257B 0.0s

=> [internal] load metadata for docker.io/library/python:3.12-slim

...

Now sign it.

matt.brown@matt cosign-generated-keys % cosign sign --key cosign.key sfmatt/hello-flask-signed:v1

WARNING: Image reference sfmatt/hello-flask-signed:v1 uses a tag, not a digest, to identify the image to sign.

This can lead you to sign a different image than the intended one. Please use a

digest (example.com/ubuntu@sha256:abc123...) rather than tag

(example.com/ubuntu:latest) for the input to cosign. The ability to refer to

images by tag will be removed in a future release.

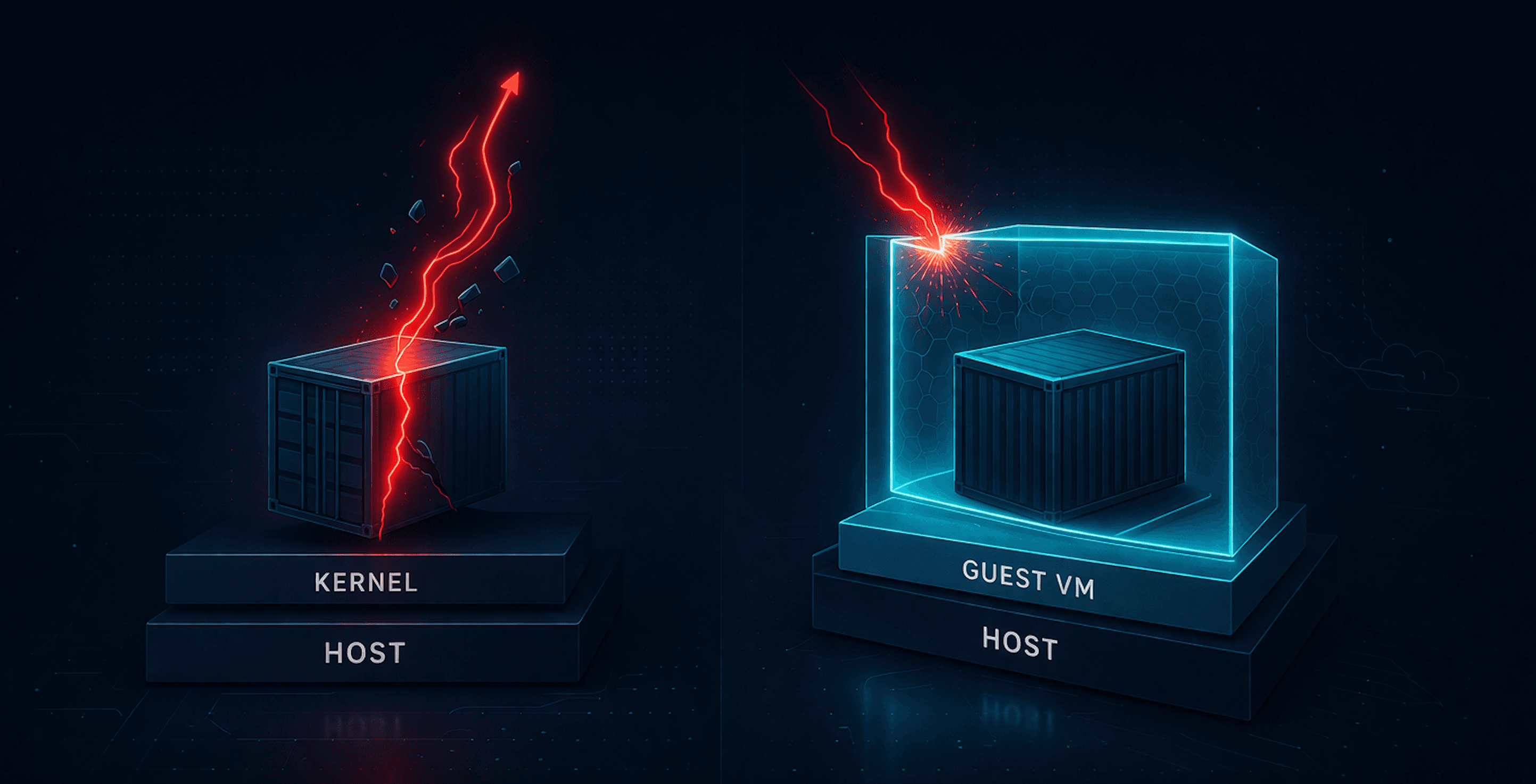

For some reason I thought signing it by tag was a smart idea. Cosign quickly shut me down, albeit they don't do the best job of explaining why. But of course the reason is fairly simple.

Tags like :v1 are mutable. Cosign resolves the tag to a digest at signing time and signs that digest, so if the tag moved between your build and your signing step, you end up signing an image you never intended to vouch for. That breaks the entire trust model. The digest uniquely identifies that exact build. Once signed, no one can change the content behind it without the digest changing and the signature no longer matching.
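To make the "digest identifies the exact build" point concrete, here is a minimal sketch of content addressing. The `digest_of` helper and the two stand-in manifests are made up for illustration; a real registry hashes the actual manifest bytes, but the idea is the same.

```shell
#!/bin/sh
# Hypothetical helper: hash a blob the way a registry content-addresses it.
# Falls back to `shasum -a 256` where `sha256sum` is missing (e.g. stock macOS).
digest_of() {
  if command -v sha256sum >/dev/null 2>&1; then
    printf '%s' "$1" | sha256sum | awk '{print "sha256:" $1}'
  else
    printf '%s' "$1" | shasum -a 256 | awk '{print "sha256:" $1}'
  fi
}

# Two stand-in "manifests" that differ by a single character.
original='{"layers":["app-v1"]}'
rebuilt='{"layers":["app-v2"]}'

# The tag could point at either one; the digests can never collide in practice.
digest_of "$original"
digest_of "$rebuilt"
```

Any change to the content produces a different digest, which is why a signature bound to a digest cannot silently drift the way a tag can.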

Cosign solves this by signing by digest, not tag. According to the warning, signing by tag will not even be an option in a future release. So let's grab the digest if you didn't save it and do it that way. We’ll use Crane, a lightweight CLI from Google’s go-containerregistry project that makes it easy to inspect, copy, and manipulate container images right from the terminal. Install it via Homebrew if you don't have it.

matt.brown@matt ~ % brew install crane

matt.brown@matt hello-flask-signed % crane digest ghcr.io/sf-matt/hello-flask-signed:v1

sha256:blahblah

Take that returned value to sign.

matt.brown@matt cosign-generated-keys % cosign sign --key cosign.key ghcr.io/sf-matt/hello-flask-signed@sha256:blahblah

Enter password for private key:

WARNING: "ghcr.io/sf-matt/hello-flask-signed" appears to be a private repository, please confirm uploading to the transparency log at "https://rekor.sigstore.dev"

Are you sure you would like to continue? [y/N] N

Interesting. What exactly does this mean? When you sign an image in a private repository, Cosign warns you that it is about to upload metadata to the public Rekor transparency log (tlog).

What’s actually published

Cosign never uploads your image contents. From my investigation, it creates just a small record containing:

- the image digest (the SHA256 hash),
- your signing certificate (for keyless),
- and a cryptographic proof that the entry exists in the log.

This allows anyone to later verify when and by whom an image was signed. That’s great for public supply chains, but not usually necessary for internal builds.

Why we don’t need Rekor here (IMO)

If you’re just signing and verifying images you built to run in your cluster, you already control both the registry and the verification policy. Publishing to a public transparency log adds no security benefit. It just makes your internal image digests public.

Then let's skip the tlog. We can tell Cosign not to publish to Rekor when signing private images. And we add `--recursive` to account for a multi-arch image.

cosign sign --tlog-upload=false --key cosign.key --recursive ghcr.io/sf-matt/hello-flask-signed@sha256:blahblah

You’ll still get a valid signature that Kyverno can verify, but no public audit entry. And that is it. We have our signed image. The next question is what exactly did that do.
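The resolve-then-sign steps above can be wrapped into one small helper. This is only a sketch: `repo_of` and `sign_by_digest` are hypothetical names, and it assumes `crane` and `cosign` are on your PATH and that you want the same flags used in this post.

```shell
#!/bin/sh
# Hypothetical helper: strip a :tag or @digest suffix, leaving the repository.
repo_of() {
  ref="${1%%@*}"        # drop any @digest suffix
  last="${ref##*/}"     # final path component, e.g. "hello-flask-signed:v1"
  case "$last" in
    *:*) printf '%s\n' "${ref%:*}" ;;  # a tag is present, strip it
    *)   printf '%s\n' "$ref" ;;       # no tag (a registry port is left alone)
  esac
}

# Hypothetical helper: resolve the reference to a digest, then sign that digest.
# Uses the same cosign flags as the command above (no Rekor upload, recursive).
sign_by_digest() {
  image="$1"
  key="$2"
  digest="$(crane digest "$image")" || return 1
  cosign sign --tlog-upload=false --key "$key" --recursive \
    "$(repo_of "$image")@${digest}"
}

# Usage (not run here):
#   sign_by_digest ghcr.io/sf-matt/hello-flask-signed:v1 cosign.key
```

Resolving the digest once and passing it explicitly means the thing you sign is pinned, even if the tag moves a moment later.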

Verifying a Signature

So far, Cosign has given us no visible proof in the terminal that the image is signed. To confirm that it is, go ahead and verify against our public key:

matt.brown@matt cosign-generated-keys % cosign verify \

--key cosign.pub \

--insecure-ignore-tlog=true \

ghcr.io/sf-matt/hello-flask-signed@sha256:blahblah

WARNING: Skipping tlog verification is an insecure practice that lacks transparency and auditability verification for the signature.

Verification for ghcr.io/sf-matt/hello-flask-signed@sha256:blahblah--

The following checks were performed on each of these signatures:

- The cosign claims were validated

- The signatures were verified against the specified public key

[{"critical":{"identity":{"docker-reference":"ghcr.io/sf-matt/hello-flask-signed"},"image":{"docker-manifest-digest":"sha256:blahblah"},"type":"cosign container image signature"},"optional":null}]

That’s the confirmation. But what do we have, exactly? To find where the signature lives, let's run triangulate.

matt.brown@matt cosign-generated-keys % cosign triangulate ghcr.io/sf-matt/hello-flask-signed@sha256:blahblah

ghcr.io/sf-matt/hello-flask-signed:sha256-blahblah.sig

Ok let's see the artifact in GHCR using tree.

matt.brown@matt cosign-generated-keys % cosign tree ghcr.io/sf-matt/hello-flask-signed@sha256:blahblah

📦 Supply Chain Security Related artifacts for an image: ghcr.io/sf-matt/hello-flask-signed@sha256:blahblah

└── 🔐 Signatures for an image tag: ghcr.io/sf-matt/hello-flask-signed:sha256-blahblah.sig

└── 🍒 sha256:different-blahblah

You can check the GitHub UI for this signature as well.

What the .sig Image Actually Is

When Cosign 2.x or earlier signs an image, it pushes a signature artifact back into the registry. That artifact appears as a tag ending in .sig, as we saw with ghcr.io/sf-matt/hello-flask-signed:sha256-<digest>.sig. Behind the scenes, this is just an OCI manifest whose layer is a small JSON payload naming the signed digest, with the signature, certificate (if keyless), and optional transparency-log bundle attached as annotations. Cosign and tools like Kyverno automatically discover and verify this artifact when checking your image, so you never have to handle the .sig directly.
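The naming convention is simple enough to reproduce by hand. As a small sketch (the `sig_tag` function is a made-up name, and it only mirrors what `cosign triangulate` printed earlier for the legacy .sig layout):

```shell
#!/bin/sh
# Hypothetical helper: derive the legacy signature tag from repo@algo:hex.
sig_tag() {
  ref="$1"
  repo="${ref%%@*}"     # everything before the @
  digest="${ref#*@}"    # e.g. sha256:blahblah
  algo="${digest%%:*}"  # sha256
  hex="${digest#*:}"    # blahblah
  printf '%s:%s-%s.sig\n' "$repo" "$algo" "$hex"
}

sig_tag "ghcr.io/sf-matt/hello-flask-signed@sha256:blahblah"
```

The colon in the digest becomes a dash because a tag cannot contain a colon, and the `.sig` suffix marks the artifact as a signature for that exact digest.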

OIDC with Sigstore

For public images, we can skip local keys entirely and sign using Sigstore’s keyless mode. This super easy mode authenticates you through your GitHub identity (or a couple others) via OpenID Connect (OIDC).

Since this example uses a public image, you can follow along with mine: ghcr.io/sf-matt/hello-flask-oidc:v1.

To sign it, just run the same as before but with absolutely no keys.

cosign sign ghcr.io/sf-matt/hello-flask-oidc@sha256:04147f2536d03c40a3ac595de6c1c87f06924b775dc92442e0b3b04e5ed5793e

Cosign will open a browser window and ask you to log in with GitHub (or others). Once authenticated, it issues a short-lived signing certificate from Fulcio and uploads the signature to both the registry and the Rekor transparency log.

You’ll see a message confirming the signature and transparency log entry:

Retrieving signed certificate...

Successfully verified SCT...

tlog entry created with index: 672916270

Pushing signature to: ghcr.io/sf-matt/hello-flask-oidc

To verify the signature:

cosign verify --certificate-identity "sdmattbrown@gmail.com" --certificate-oidc-issuer "https://github.com/login/oauth" ghcr.io/sf-matt/hello-flask-oidc@sha256:<digest>

Cosign will validate the certificate, confirm its entry in Rekor, and show the signed claims. No local keys, no password prompts, just OIDC-based signing tied to your GitHub identity.

Boom.

Validating Image via Kyverno

OK, let's move to the more interesting part, K8s. We start by setting up an [ImageValidatingPolicy](https://kyverno.io/docs/policy-types/image-validating-policy/). Here is an example for our initial signed image.

If you need some guidance installing Kyverno it is just a simple Helm deploy, but more details can be found in an older post.

apiVersion: policies.kyverno.io/v1alpha1
kind: ImageValidatingPolicy
metadata:
  name: ghcr-check-images
spec:
  matchConstraints:
    resourceRules:
      - apiGroups: [""]
        apiVersions: ["v1"]
        operations: ["CREATE"]
        resources: ["pods"]
  evaluation:
    background:
      enabled: false
  validationActions: [Deny]
  failurePolicy: Ignore
  attestors:
    - name: cosign
      cosign:
        key:
          data: |
            -----BEGIN PUBLIC KEY-----
            MFkwEwYHKoZIzj0CAQYIKoZIzj0DAQcDQgAEMitBUveNmKw57+UdJ3+mbGKlWp5B
            oWm+HWOBKap2V0Oa2whm/IHHoqReZUPdgj+fsAGyyBvSlbbfQV44zJhx5w==
            -----END PUBLIC KEY-----
  validations:
    - expression: >
        images.containers.all(i,
          (image(i).registry() == "ghcr.io" &&
           image(i).repository().startsWith("sf-matt/"))
            ? verifyImageSignatures(i, [attestors.cosign]) > 0
            : true
        )
      message: all images from ghcr.io/sf-matt must have a valid Cosign signature

This Kyverno ImageValidatingPolicy does the following:

- Scope: Applies to all Pod CREATE operations.
- Target: Only evaluates images pulled from ghcr.io/sf-matt.
- Attestor: Uses an embedded Cosign public key for signature verification.
- Logic: Runs verifyImageSignatures() on each container image.
- Enforcement: Denies workloads if any image from your GHCR namespace isn’t signed by that trusted key.
- Behavior: Ignores other registries and disables background scans (evaluation happens only at creation).
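If the CEL ternary is hard to read, the same decision can be sketched in plain shell. This is only an illustration of the logic, not how Kyverno evaluates it: `in_scope` and `decide` are hypothetical names, and the signature count is passed in by hand instead of being verified.

```shell
#!/bin/sh
# Hypothetical helper: is this image one the policy cares about?
# Mirrors registry() == "ghcr.io" && repository().startsWith("sf-matt/").
in_scope() {
  case "$1" in
    ghcr.io/sf-matt/*) return 0 ;;
    *) return 1 ;;
  esac
}

# Hypothetical helper: in-scope images need at least one valid signature,
# everything else is allowed through untouched.
decide() {
  image="$1"
  valid_signatures="$2"
  if in_scope "$image"; then
    if [ "$valid_signatures" -gt 0 ]; then echo allow; else echo deny; fi
  else
    echo allow
  fi
}

decide ghcr.io/sf-matt/hello-flask-signed:v1 0   # in scope, unsigned
decide ghcr.io/sf-matt/hello-flask-signed:v1 1   # in scope, signed
decide docker.io/library/nginx:latest 0          # out of scope
```

Note the trailing slash in the match: a look-alike namespace such as ghcr.io/sf-matt-other/ falls out of scope, so it is neither checked nor blocked by this policy.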

In short: if it comes from your namespace and isn’t cryptographically signed, it never runs.
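One way to poke at a policy like this without leaving pods behind is a server-side dry run. The sketch below assumes the admission webhook is invoked for dry-run requests in your cluster; `try_admission`, the pod name, and the `default` namespace are placeholders I picked for illustration.

```shell
#!/bin/sh
# Hypothetical helper: ask the API server to admit a pod without persisting it.
# --dry-run=server sends the request through admission, so a denial from the
# policy should surface here as an error from kubectl.
try_admission() {
  name="$1"
  image="$2"
  kubectl run "$name" --image="$image" --dry-run=server -n default
}

# Usage (not run here):
#   try_admission sig-test ghcr.io/sf-matt/hello-flask-signed:v1
```

A non-zero exit status from `try_admission` means the request was rejected somewhere along the way, and the error text should say which webhook denied it.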

A problem will arise if your GitHub Container Registry (GHCR) is private, which we have done in this example. Kyverno needs credentials to pull and verify signatures. You can provide these using a standard Kubernetes Secret of type dockerconfigjson.

Generate a GitHub Personal Access Token (classic) with the read:packages scope, then create the secret in the same namespace where Kyverno runs (usually kyverno). Running it imperatively is easy enough.

kubectl create secret docker-registry ghcr-creds --docker-server=ghcr.io --docker-username=<your-github-username> --docker-password=<your-personal-access-token> --docker-email=<your-email> -n kyverno

Confirm creation:

kubectl get secret ghcr-creds -n kyverno
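For context on what that secret actually holds: a docker-registry secret wraps a small JSON document keyed by registry host, with an `auth` field that is just base64 of `username:token`. A rough sketch of that shape (the function names and the example values are made up, the email field is omitted, and values containing quotes would need proper JSON escaping):

```shell
#!/bin/sh
# Hypothetical helper: the "auth" value is base64("username:token").
registry_auth() {
  printf '%s:%s' "$1" "$2" | base64 | tr -d '\n'
}

# Hypothetical helper: assemble a minimal .dockerconfigjson payload.
dockerconfig_json() {
  server="$1"
  user="$2"
  token="$3"
  printf '{"auths":{"%s":{"username":"%s","password":"%s","auth":"%s"}}}\n' \
    "$server" "$user" "$token" "$(registry_auth "$user" "$token")"
}

# Placeholder values, not real credentials.
dockerconfig_json ghcr.io example-user example-token
```

This is also a reminder that base64 is encoding, not encryption: anyone who can read the secret can read the token.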

Then reference the secret inside your ImageValidatingPolicy using the credentials field. This tells Kyverno to use your GHCR credentials when verifying Cosign signatures for private images.

apiVersion: policies.kyverno.io/v1alpha1
kind: ImageValidatingPolicy
metadata:
  name: ghcr-check-images
spec:
  matchConstraints:
    resourceRules:
      - apiGroups: [""]
        apiVersions: ["v1"]
        operations: ["CREATE"]
        resources: ["pods"]
  evaluation:
    background:
      enabled: false
  validationActions: [Deny]
  failurePolicy: Ignore
  credentials:
    providers:
      - "github"
      - "default"
    secrets:
      - "ghcr-creds"
  attestors:
    - name: cosign
      cosign:
        key:
          data: |
            -----BEGIN PUBLIC KEY-----
            MFkwEwYHKoZIzj0CAQYIKoZIzj0DAQcDQgAEoYIRqyJPEOGk84mh9W3XWA42dOPm
            UE03IhLs2sLnRPegfWAO+6mSy8pbEO8R5orKIXqHWq2fz8s6UG9iTXbaRQ==
            -----END PUBLIC KEY-----
        ctlog:
          insecureIgnoreTlog: true
          url: "https://rekor.sigstore.dev"
  validations:
    - expression: >
        images.containers.all(i,
          (image(i).registry() == "ghcr.io" &&
           image(i).repository().startsWith("sf-matt/"))
            ? verifyImageSignatures(i, [attestors.cosign]) > 0
            : true
        )
      message: all images from ghcr.io/sf-matt must have a valid Cosign signature

But here's the problem I've run into: Kyverno requests the secret as if it were a cluster-scoped object. If you turn up the log verbosity, you can see the lookup go to a URL with no namespace in the path and come back with a 404.

2025-11-03T22:22:02Z -5 k8s.io/client-go@v0.33.3/transport/round_trippers.go:632 > Response logger=klog milliseconds=1 status="404 Not Found" url=https://10.96.0.1:443/api/v1/secrets/ghcr-creds v=6 verb=GET

So while it will work if you switch the image to public, that sorta defeats the point of what we're trying here. So let's switch to a good old ClusterPolicy with verifyImages.

apiVersion: kyverno.io/v1
kind: ClusterPolicy
metadata:
  name: check-ghcr-image
spec:
  webhookConfiguration:
    failurePolicy: Fail
    timeoutSeconds: 30
  background: false
  rules:
    - name: check-ghcr-image
      match:
        any:
          - resources:
              kinds:
                - Pod
      verifyImages:
        - imageReferences:
            - "ghcr.io/sf-matt/hello-flask*"
          failureAction: Enforce
          attestors:
            - entries:
                - keys:
                    publicKeys: |-
                      -----BEGIN PUBLIC KEY-----
                      MFkwEwYHKoZIzj0CAQYIKoZIzj0DAQcDQgAEoYIRqyJPEOGk84mh9W3XWA42dOPm
                      UE03IhLs2sLnRPegfWAO+6mSy8pbEO8R5orKIXqHWq2fz8s6UG9iTXbaRQ==
                      -----END PUBLIC KEY-----
                    rekor:
                      ignoreTlog: true
                      url: https://rekor.sigstore.dev
                      pubkey: |-
                        -----BEGIN PUBLIC KEY-----
                        MFkwEwYHKoZIzj0CAQYIKoZIzj0DAQcDQgAEoYIRqyJPEOGk84mh9W3XWA42dOPm
                        UE03IhLs2sLnRPegfWAO+6mSy8pbEO8R5orKIXqHWq2fz8s6UG9iTXbaRQ==
                        -----END PUBLIC KEY-----
                    ctlog:
                      ignoreSCT: true
                      pubkey: |-
                        -----BEGIN PUBLIC KEY-----
                        MFkwEwYHKoZIzj0CAQYIKoZIzj0DAQcDQgAEoYIRqyJPEOGk84mh9W3XWA42dOPm
                        UE03IhLs2sLnRPegfWAO+6mSy8pbEO8R5orKIXqHWq2fz8s6UG9iTXbaRQ==
                        -----END PUBLIC KEY-----

And now you should be able to deploy it just fine. And if you have Policy Reporter running you can see it pass.

You actually wouldn’t have been able to see that for ImageValidatingPolicy. Another plus for ClusterPolicy.

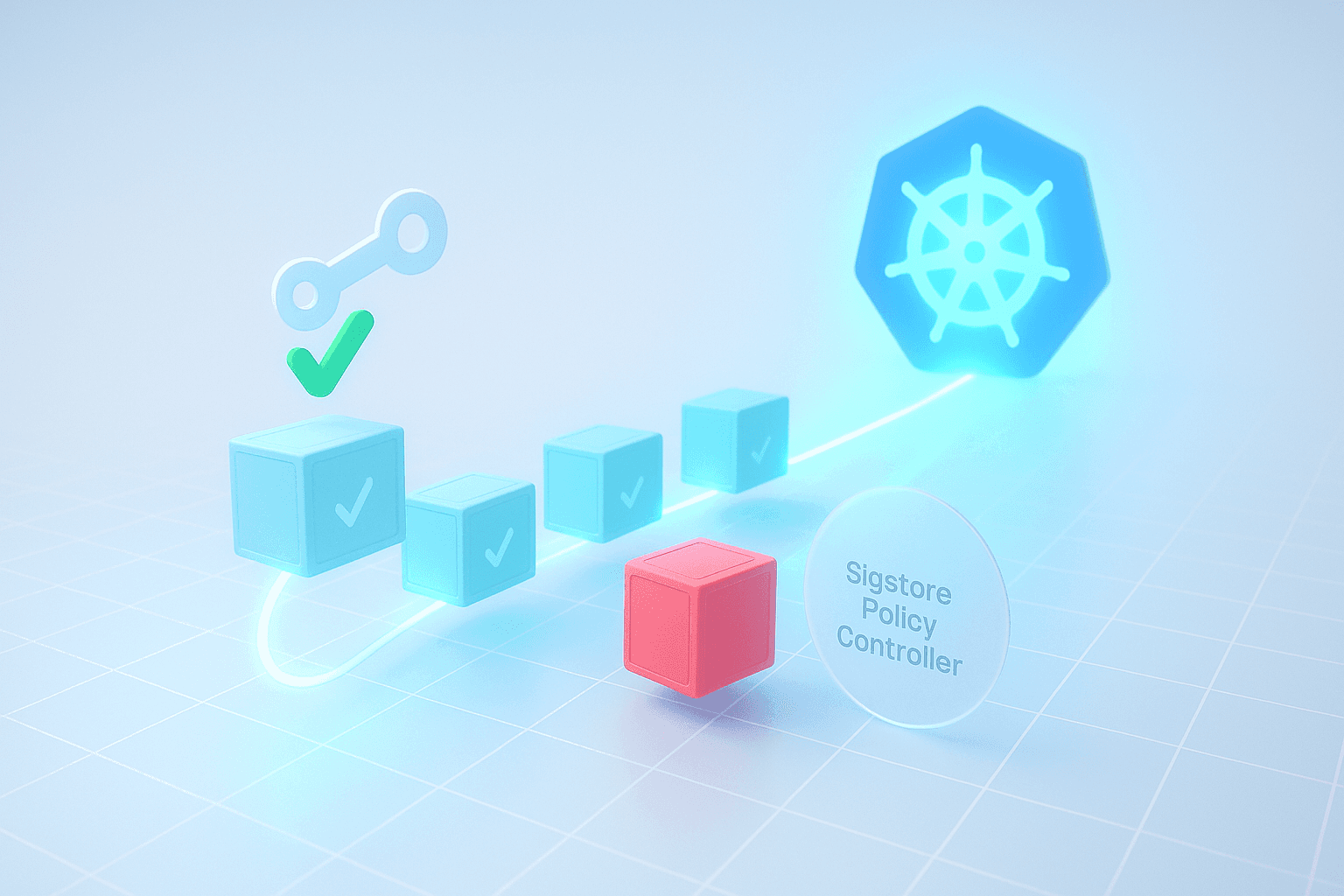

Sigstore Policy Controller

Going through the process of validating with Kyverno feels quite clunky. So let's try another way.

In the Sigstore docs I found their Policy Controller, which is just an admission controller. So let's try using Sigstore policy-controller to accomplish the same as what we did with Kyverno.

We'll start by using the cosign generated keypair from before or you can create a new one. You could reuse the previous app or create a new one.

Install Policy-Controller

Let's get started with installing policy-controller.

helm repo add sigstore https://sigstore.github.io/helm-charts

helm repo update

helm upgrade --install policy-controller sigstore/policy-controller -n cosign-system --create-namespace

Then you need to label the namespace to test.

Beware that when you do this if you have no policy for an image you will be blocked from deploying that image.

kubectl label namespace default policy.sigstore.dev/include=true

Now we have our newest Admission Controller installed. Let's create a ClusterImagePolicy that requires your keypair signature. Inline the public key (PEM).

apiVersion: policy.sigstore.dev/v1beta1
kind: ClusterImagePolicy
metadata:
  name: require-cosign-keypair
spec:
  mode: enforce
  images:
    - glob: ghcr.io/sf-matt/hello-flask*
  authorities:
    - key:
        data: |
          -----BEGIN PUBLIC KEY-----
          MFkwEwYHKoZIzj0CAQYIKoZIzj0DAQcDQgAEoYIRqyJPEOGk84mh9W3XWA42dOPm
          UE03IhLs2sLnRPegfWAO+6mSy8pbEO8R5orKIXqHWq2fz8s6UG9iTXbaRQ==
          -----END PUBLIC KEY-----

Deploy your new policy.

kubectl apply -f require-cosign-keypair.yaml

Then try deploying the same workload. It should deploy fine. You can see the validations by looking at the logs.

kubectl -n cosign-system logs deploy/policy-controller-webhook

You should see something like the following:

{...Validated 1 policies for image ghcr.io/sf-matt/hello-flask-signed@sha256:blahblah...}

And that is that. It works quite easily: no issues with image pull secrets and no complex CEL expressions (although we already dropped those by moving to a ClusterPolicy). Running another admission controller is not ideal, but by their very nature they stack, so it is definitely worth considering. The overhead of the one pod is not too high either:

Limits:
  cpu: 200m
  memory: 512Mi
Requests:
  cpu: 100m
  memory: 128Mi

Wrap Up

Kubernetes will happily run whatever you hand it — no questions asked. It doesn’t check signatures, provenance, or who actually built the thing. It’s the ultimate easy button.

Image signing is the missing trust layer most teams skip, even though it’s absurdly simple to add. Sure, it’s CI-friendly too, but this wasn’t meant to be another “here’s a GitHub Action” post.

With Cosign, you can give every build a verifiable identity, whether that's through your own keypair or keyless signing tied to GitHub’s OIDC workflow. With Kyverno, you can draw clear boundaries around what’s allowed to run in the cluster. And with Sigstore Policy Controller, you can tighten that loop with much simpler and more direct policies.

Together, they turn the Kubernetes API into an actual supply-chain checkpoint. If something shows up unsigned, tampered with, or built outside your pipelines, it simply doesn’t start.

The best part? It’s dead simple. All open source, all auditable, and built on the same foundations powering modern supply-chain security.

So go for the low-hanging fruit — start by making sure your cluster only runs what’s been signed and proven to be yours.