Access Control, Actually: Teleport To the Rescue

Simplifying Kubernetes RBAC Using Teleport

Working as a solutions architect while going deep on Kubernetes security — prevention-first thinking, open source tooling, and a daily rabbit hole of hands-on learning. I make the mistakes, then figure out how to fix them (eventually).

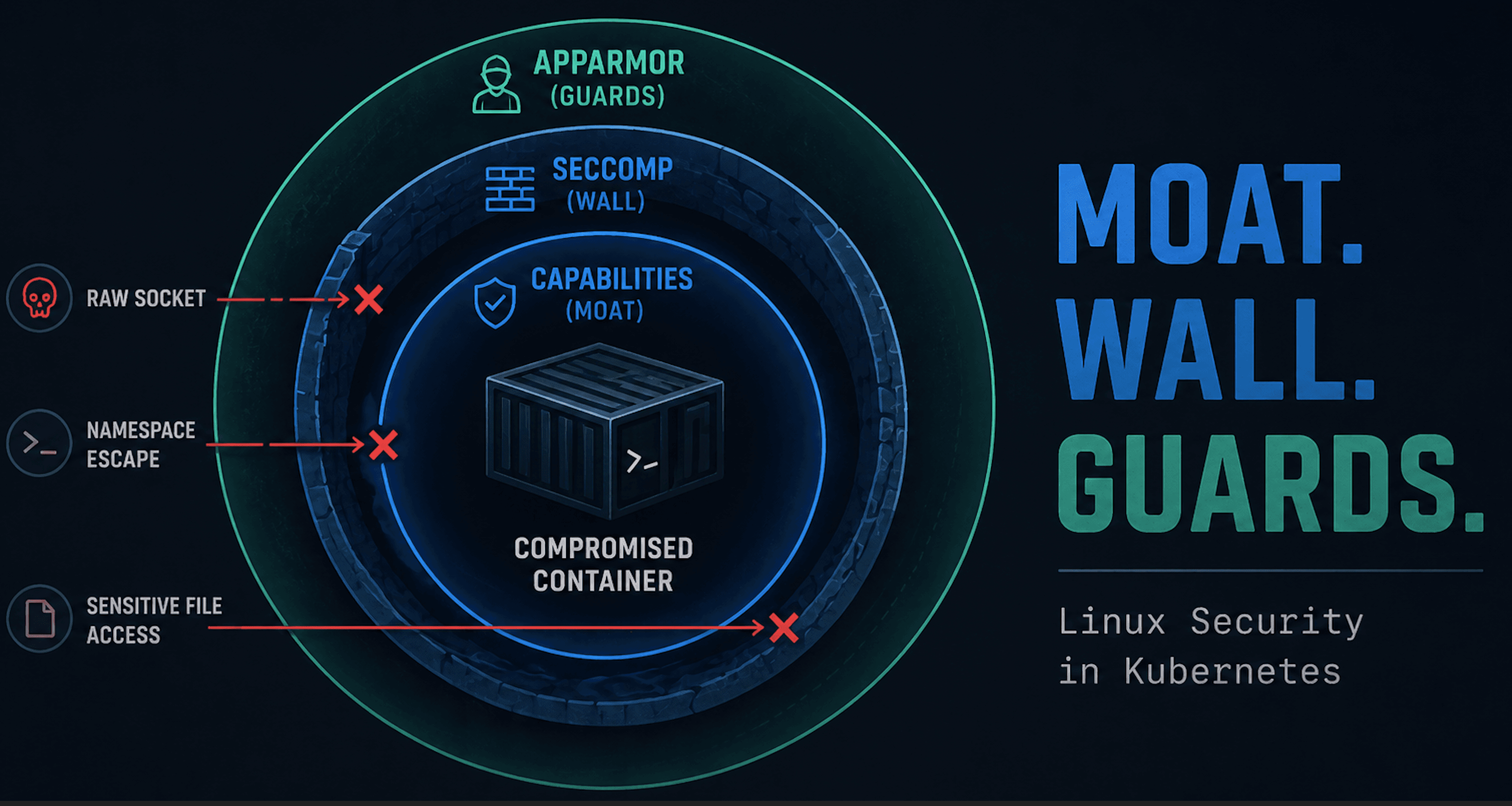

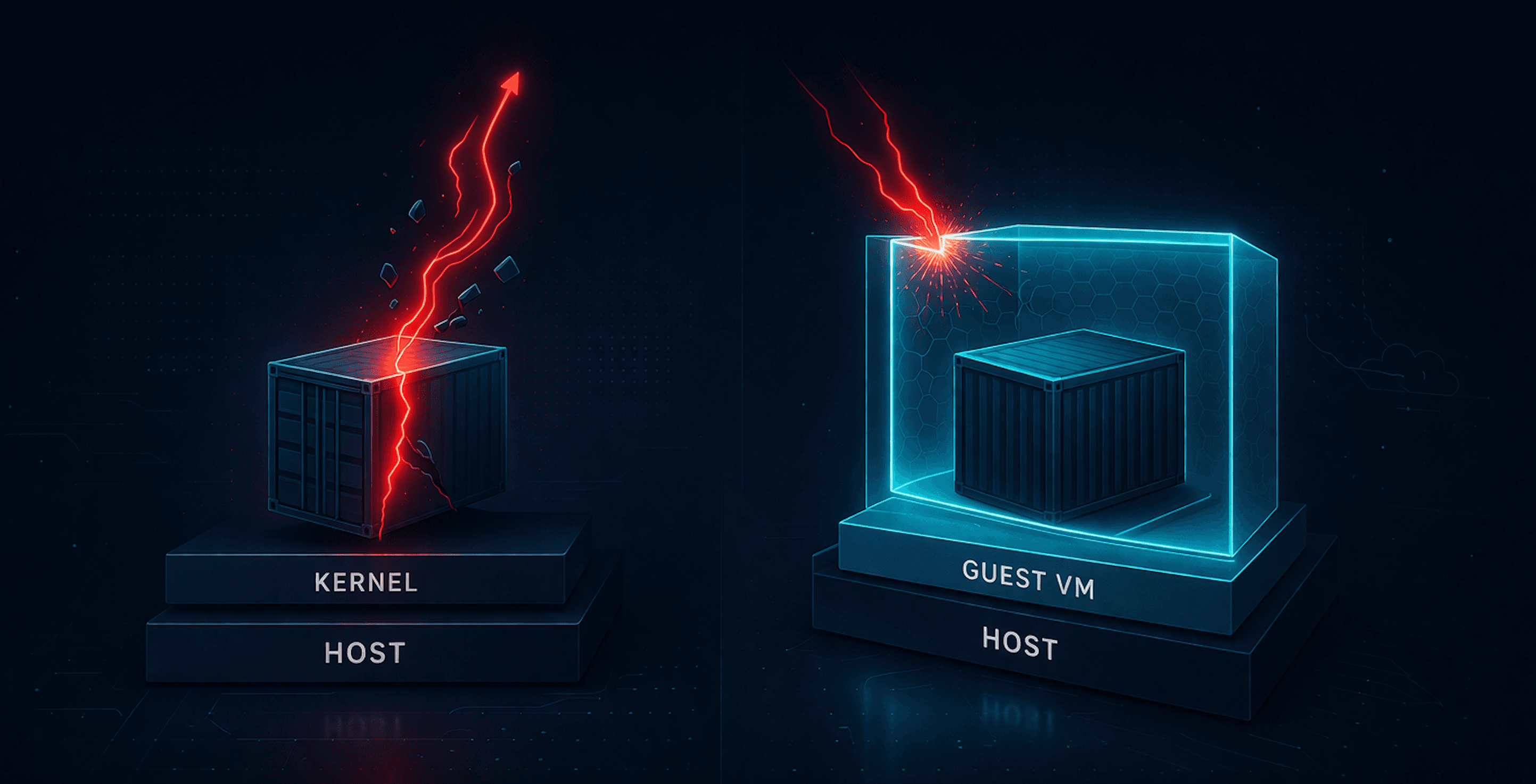

Last time, we walked the whole chain of Kubernetes access — from SSH on the node to the default service account that every Pod inherits. That exercise made one thing clear: Kubernetes doesn’t have a single front door. It has a series of loosely connected locks, and most of them assume you’ll do the right thing.

RBAC gives us policy, not identity. It answers what someone can do, not who they are. And that's where many setups stop. Developers authenticate to Okta or GitHub, grab a kubeconfig from somewhere, and the cluster happily trusts whatever cert that file presents. In other words, Kubernetes leaves the identity problem unsolved.

There has to be a better way, right?

What if every access request were tied to a real human identity — backed by short-lived credentials and logged end to end — without touching the Kubernetes API?

That’s what Teleport does. It takes the same basic primitives (certificates, RBAC, and Kubernetes’ native API) and layers an auditable, identity-aware access proxy on top.

In this post, we’ll set up a local Teleport instance, connect it to a cluster, and replace our kubeconfig with short-lived, verifiable identity. Sounds pretty good.

Note on licensing: Teleport's open-source edition is released under AGPL-3.0, which (according to my research) means that if you modify and run it as a network service for others, you're expected to share your source code. For most personal labs and internal deployments, this isn't an issue. I'll cover open-source licensing in more detail in a separate post. It's a surprisingly interesting topic.

The Lab Setup (Welcome to Hell)

Ugh, this was not a fun exercise, although in hindsight it is easy to understand. The instructions from the Teleport docs mostly work in a local environment, but of course not perfectly, so here is what worked for me.

I started simple: one Teleport proxy in Docker and a self-signed certificate with mkcert. Nothing fancy, no external dependencies. This was all done on my kubeadm controlplane node.

Install mkcert

sudo apt install mkcert

mkcert -install

Next, create a folder to hold the certs and share it with the Docker container, and copy the mkcert CA into it. The trickiest part was getting the cert right: issuing it for the IP address of the control plane node was the key.

mkdir teleport-tls

cd teleport-tls

mkcert 192.168.64.4 #Or your node IP

cp "$(mkcert -CAROOT)/rootCA.pem" .

Spin Up Docker

Now we have to spin up our Teleport Docker instance. Of course make sure Docker is installed on your node.

docker run -it -v "$(pwd)":/etc/teleport-tls -p 3080:443 ubuntu:22.04

Install Teleport inside the container

apt-get update && apt-get install -y curl

cp /etc/teleport-tls/rootCA.pem /etc/ssl/certs/mkcertCA.pem

curl https://cdn.teleport.dev/install.sh | bash -s 18.2.4

Then generate a config file with the generated certs:

teleport configure -o file \
  --cluster-name=teleport \
  --public-addr=192.168.64.4:3080 \
  --cert-file=/etc/teleport-tls/192.168.64.4.pem \
  --key-file=/etc/teleport-tls/192.168.64.4-key.pem

Finally, start it up:

teleport start --config=/etc/teleport.yaml

That’s your full access proxy running locally. The Teleport web UI comes up at https://192.168.64.4:3080 (or whatever IP address you have).

Create your first user

Fire up another terminal and connect to your Docker container, which you can find by the usual Docker command:

matt@controlplane:~$ docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

7867833a79e8 ubuntu:22.04 "/bin/bash" 3 days ago Up 27 minutes 0.0.0.0:3080->443/tcp, [::]:3080->443/tcp heuristic_sammet

matt@controlplane:~$ docker exec -it 7867833a79e8 bash

root@7867833a79e8:/#

Then create a user for the Teleport UI (I kept the logins from the docs, but you don't need them).

tctl users add teleport-admin --roles=editor,access --logins=root,ubuntu,ec2-user

You’ll get a signup link. Open it in your browser to complete the setup and enable OTP. Annoying, but it gets worse later.

We're making good progress.

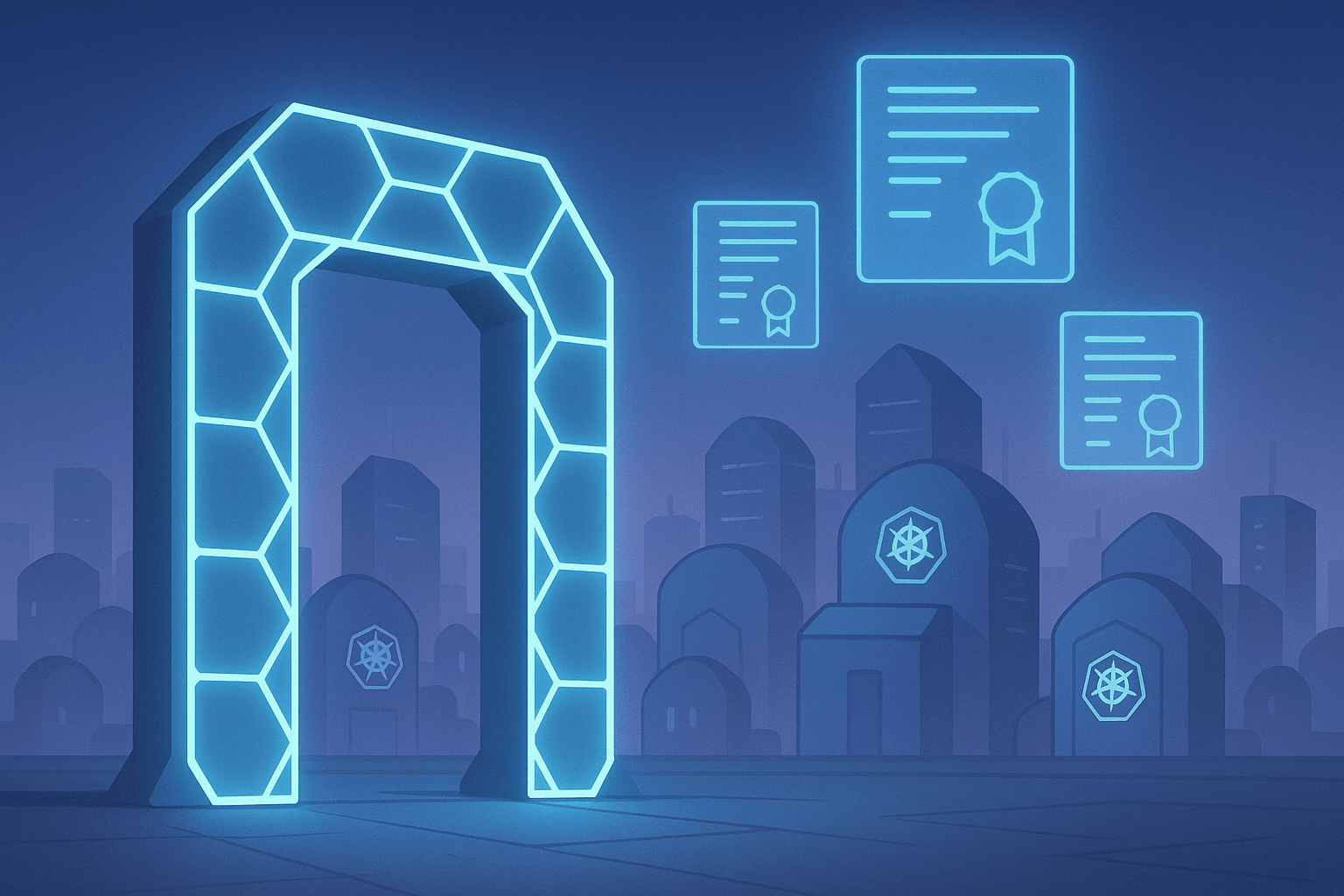

Integrating Kubernetes with Teleport

You'll notice a lot of resource options in the UI, but we'll stick with Kubernetes. On the Kubernetes side, it just requires you to follow the resource enrollment process.

Enroll Kubernetes Resource

Install the helm chart.

helm repo add teleport https://charts.releases.teleport.dev && helm repo update

Configure the cluster values in the UI and you'll get a command like the following to run in your terminal.

cat << EOF > prod-cluster-values.yaml
roles: kube,app,discovery
authToken: 3bdc40c408f1cd8809daeadfd83202e4
proxyAddr: 192.168.64.4:3080
kubeClusterName: kubernetes
labels:
    teleport.internal/resource-id: d5319a0c-5db5-4916-9984-8a598f2ae740
EOF

helm install teleport-agent teleport/teleport-kube-agent -f prod-cluster-values.yaml --version 18.2.4 \
  --create-namespace --namespace teleport

You might change it to helm upgrade --install instead. You never know if you'll have to run it again.
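A minimal sketch of that re-runnable variant, wrapped in a function. The name install_agent is my own invention; the release name, chart, version, and values file are the ones from the command above.

```shell
# Re-runnable version of the agent install: creates the release the first
# time and upgrades it in place on later runs.
# install_agent is a hypothetical helper name, not part of Teleport or Helm.
install_agent() {
  local values="${1:-prod-cluster-values.yaml}"
  helm upgrade --install teleport-agent teleport/teleport-kube-agent \
    -f "$values" \
    --version 18.2.4 \
    --create-namespace --namespace teleport
}

# Usage: install_agent                     # uses prod-cluster-values.yaml
#        install_agent other-values.yaml   # hypothetical alternate values file
```

Keeping the values file as an argument makes it easy to try a second token or cluster name without editing the function.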

Once the agent registers successfully, it appears in the Teleport UI under Kubernetes Clusters.

We'll come back to this.

Connect Client

Now we need to install our client. I went to a completely different Ubuntu machine that had no kubeconfig but was still on the same network.

Install Teleport client

sudo apt install -y apt-transport-https

curl https://deb.releases.teleport.dev/teleport-pubkey.asc | sudo tee /usr/share/keyrings/teleport-archive-keyring.asc

echo "deb [signed-by=/usr/share/keyrings/teleport-archive-keyring.asc] https://deb.releases.teleport.dev/ stable main" | sudo tee /etc/apt/sources.list.d/teleport.list

sudo apt update && sudo apt install teleport

Verify:

matt@linux-server-1:~$ tsh version

Teleport v18.2.4 git:v18.2.4-0-gb7ab869 go1.24.7

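Cool, we're all set. Except you probably don't have kubectl! So one more step. (The repo below pins v1.34; kubectl is only supported within one minor version of the API server, so pick the version that matches your cluster.)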

sudo apt-get update

sudo apt-get install -y apt-transport-https ca-certificates curl gnupg

curl -fsSL https://pkgs.k8s.io/core:/stable:/v1.34/deb/Release.key | sudo gpg --dearmor -o /etc/apt/keyrings/kubernetes-apt-keyring.gpg

sudo chmod 644 /etc/apt/keyrings/kubernetes-apt-keyring.gpg

echo 'deb [signed-by=/etc/apt/keyrings/kubernetes-apt-keyring.gpg] https://pkgs.k8s.io/core:/stable:/v1.34/deb/ /' | sudo tee /etc/apt/sources.list.d/kubernetes.list

sudo chmod 644 /etc/apt/sources.list.d/kubernetes.list

sudo apt-get update

sudo apt-get install -y kubectl

Login via Teleport

Now for the finale. In the Teleport UI, select your Kubernetes resource and copy the tsh command shown under “Connect”.

Run it to connect:

matt@linux-server-1:~$ tsh login --proxy=192.168.64.4:3080 --auth=local --user=teleport-admin teleport

ERROR: WARNING:

The proxy you are connecting to has presented a certificate signed by a

unknown authority. This is most likely due to either being presented

with a self-signed certificate or the certificate was truly signed by an

authority not known to the client.

If you know the certificate is self-signed and would like to ignore this

error use the --insecure flag.

If you have your own certificate authority that you would like to use to

validate the certificate chain presented by the proxy, set the

SSL_CERT_FILE and SSL_CERT_DIR environment variables respectively and try

again.

If you think something malicious may be occurring, contact your Teleport

system administrator to resolve this issue.

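Oops, bad cert: this client machine doesn't trust the mkcert CA. For this lab, let's bypass that and log in with your password and OTP. Note: --insecure skips verification of the proxy's certificate. The connection is still encrypted, but the client can no longer confirm it is talking to the real proxy, so keep it to lab use. The cleaner fix is to copy rootCA.pem to the client and point SSL_CERT_FILE at it, as the error message suggests.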

matt@linux-server-1:~$ tsh login --proxy=192.168.64.4:3080 --auth=local --user=teleport-admin teleport --insecure

Enter password for Teleport user teleport-admin:

WARNING: You are using insecure connection to Teleport proxy https://192.168.64.4:3080

Enter an OTP code from a device:

> Profile URL: https://192.168.64.4:3080

Logged in as: teleport-admin

Cluster: teleport

Roles: access, editor

Logins: root, ubuntu, ec2-user

Kubernetes: enabled

Kubernetes cluster: "kubernetes"

Kubernetes users: teleport-admin

Kubernetes groups: system:masters

Valid until: 2025-10-19 02:17:46 -0700 PDT [valid for 12h0m0s]

Extensions: login-ip, permit-agent-forwarding, permit-port-forwarding, permit-pty, private-key-policy
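If you would rather not pass --insecure, the error message earlier names SSL_CERT_FILE as the way to trust your own CA. Here is a small sketch of that approach. It assumes you copied rootCA.pem from the control plane node to the client; the helper name tsh_trusted, the MKCERT_CA variable, and the default path are all hypothetical.

```shell
# Run tsh with the mkcert root CA trusted instead of skipping verification.
# Assumption: rootCA.pem has been copied to the client machine; the default
# path below is a made-up location, override it with MKCERT_CA.
tsh_trusted() {
  local ca="${MKCERT_CA:-$HOME/teleport/rootCA.pem}"
  if [ ! -r "$ca" ]; then
    echo "CA bundle not found: $ca" >&2
    return 1
  fi
  # SSL_CERT_FILE is the variable named in tsh's own error message.
  # It is set only for this one tsh invocation.
  SSL_CERT_FILE="$ca" tsh "$@"
}

# Usage:
#   tsh_trusted login --proxy=192.168.64.4:3080 --auth=local --user=teleport-admin teleport
```

Setting the variable per command, rather than exporting it in your shell profile, keeps the lab CA from affecting other tools on the machine.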

Switch Kubernetes context and you can see the nodes:

matt@linux-server-1:~$ tsh kube login kubernetes --insecure

matt@linux-server-1:~/teleport$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

controlplane Ready control-plane 366d v1.31.8

kubeworker1 Ready <none> 258d v1.31.8

And there it is: the aha moment. No static credentials sitting in ~/.kube/config, just short-lived, identity-based access that expires when it should. I promise this would be way easier with an EKS cluster.

Dissecting the Teleport-Generated Kubernetes Context

After logging in with:

tsh login --proxy=192.168.64.4:3080 --auth=local --user=teleport-admin --insecure

You’ve now got a short-lived identity with these traits:

- Teleport Cluster: teleport
- User: teleport-admin
- Roles: access, editor
- Kubernetes: enabled
- Kubernetes Cluster: kubernetes
- Kubernetes Groups: system:masters
- Validity: 12 hours

As we saw before, running kubectl get nodes confirms access:

controlplane Ready control-plane 366d v1.31.8

kubeworker1 Ready <none> 258d v1.31.8

What Teleport Did

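Teleport issued short-lived client certificates and injected a Kubernetes context into your kubeconfig that points kubectl at Teleport instead of the API server. You can inspect it as follows.

matt@linux-server-1:~$ cat .kube/config

A simplified sketch of a Teleport-generated section (this version assumes a dedicated Kubernetes listener on :3026 and embedded certs; the actual file from this lab, shown in the next section, goes through the proxy's web port 3080 and an exec plugin):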

apiVersion: v1
clusters:
- name: teleport-kube
  cluster:
    server: https://192.168.64.4:3026
    certificate-authority-data: <base64 PEM>
contexts:
- name: teleport-admin@teleport-kube
  context:
    cluster: teleport-kube
    user: teleport-admin@teleport-kube
    namespace: default
current-context: teleport-admin@teleport-kube
users:
- name: teleport-admin@teleport-kube
  user:
    client-certificate-data: <base64 PEM>
    client-key-data: <base64 PEM>

The server field shows that kubectl talks to the Teleport proxy rather than directly to the Kubernetes API server. Teleport validates your cert, maps your roles → K8s groups, and forwards the request securely.

Parsing Your Teleport-Injected kubeconfig

Here’s the kubeconfig your test box is using after tsh login (redacted):

apiVersion: v1
clusters:
- cluster:
    certificate-authority-data: <redacted>
    server: https://192.168.64.4:3080
    tls-server-name: kube-teleport-proxy-alpn.teleport.cluster.local
  name: teleport
contexts:
- context:
    cluster: teleport
    extensions:
    - extension: kubernetes
      name: teleport.kube.name
    user: teleport-kubernetes
  name: teleport-kubernetes
current-context: teleport-kubernetes
kind: Config
preferences: {}
users:
- name: teleport-kubernetes
  user:
    exec:
      apiVersion: client.authentication.k8s.io/v1beta1
      args:
      - kube
      - credentials
      - --kube-cluster=kubernetes
      - --teleport-cluster=teleport
      - --proxy=192.168.64.4:3080
      - --insecure
      command: /opt/teleport/system/bin/tsh
      env: null
      provideClusterInfo: false

Reading the kubeconfig (mostly right)

Teleport injects three major blocks — cluster, context, and user — each representing a layer in the connection chain.

Cluster

clusters:
- name: teleport
  cluster:
    server: https://192.168.64.4:3080
    tls-server-name: kube-teleport-proxy-alpn.teleport.cluster.local
    certificate-authority-data: <base64 PEM>

- server: Teleport proxy URL, not direct API server.

- tls-server-name: SNI/ALPN hint so the proxy knows you’re targeting Kubernetes.

- certificate-authority-data: CA bundle trusted for proxy cert validation.

Context

contexts:
- name: teleport-kubernetes
  context:
    cluster: teleport
    user: teleport-kubernetes
    extensions:
    - name: teleport.kube.name
      extension: kubernetes
current-context: teleport-kubernetes

- context: Binds Teleport cluster to Kubernetes user.

- extensions: Teleport hint for cluster name.

- current-context: The one kubectl will use.

User

users:
- name: teleport-kubernetes
  user:
    exec:
      command: /opt/teleport/system/bin/tsh
      args:
      - kube
      - credentials
      - --kube-cluster=kubernetes
      - --teleport-cluster=teleport
      - --proxy=192.168.64.4:3080
      - --insecure
      apiVersion: client.authentication.k8s.io/v1beta1

- exec.command: tsh is your auth plugin.

- args: Mint short-lived credentials on demand.

- apiVersion: Defines plugin schema for Kubernetes.

Each time kubectl runs, it shells out to tsh to request new ephemeral credentials. The proxy validates, maps your Teleport roles to Kubernetes groups, and forwards the request to the actual API server.
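To make the exec plugin step less abstract, here is a sketch of the JSON shape a credential plugin writes to stdout for kubectl to read. It follows the ExecCredential kind from the client.authentication.k8s.io/v1beta1 API named in the kubeconfig. The function name is hypothetical, the PEM values are placeholders, and exactly which fields tsh fills in is my assumption, not something taken from its output.

```shell
# Print an ExecCredential document of the kind kubectl expects from an
# exec auth plugin. Nothing here talks to Teleport; it only shows the shape.
# fake_exec_credential is a hypothetical name used for illustration.
fake_exec_credential() {
  local expires="$1"   # RFC 3339 timestamp after which kubectl asks again
  cat <<EOF
{
  "apiVersion": "client.authentication.k8s.io/v1beta1",
  "kind": "ExecCredential",
  "status": {
    "expirationTimestamp": "${expires}",
    "clientCertificateData": "<PEM certificate>",
    "clientKeyData": "<PEM private key>"
  }
}
EOF
}

# Usage: fake_exec_credential 2025-10-19T09:17:46Z
```

The point of the design is that the kubeconfig itself holds no secret: the certificate and key only exist in the plugin's output and expire with the Teleport session.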

Confirming Role Mapping

Teleport roles map to Kubernetes RBAC groups. In your case:

tsh status

...

Kubernetes users: teleport-admin

Kubernetes groups: system:masters

...

This gives you cluster-admin privileges: the built-in cluster-admin ClusterRoleBinding binds the system:masters group to the cluster-admin ClusterRole. We covered RBAC last time, but it's worth checking.

Check it with a hacky grep — we’re searching ClusterRoleBindings for any subject bound to system:masters:

matt@controlplane:~$ kubectl get clusterrolebindings -o yaml | grep -B20 -A5 "system:masters"

name: calico-typha

namespace: calico-system

- apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

annotations:

rbac.authorization.kubernetes.io/autoupdate: "true"

creationTimestamp: "2024-10-17T19:53:04Z"

labels:

kubernetes.io/bootstrapping: rbac-defaults

name: cluster-admin

resourceVersion: "134"

uid: 640338d1-5f25-4c59-bdca-893969ecb818

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: cluster-admin

subjects:

- apiGroup: rbac.authorization.k8s.io

kind: Group

name: system:masters

- apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

annotations:

meta.helm.sh/release-name: gatekeeper

Then you can prove this capability out.

matt@linux-server-1:~$ kubectl auth can-i --list | head -n 20

Resources Non-Resource URLs Resource Names Verbs

*.* [] [] [*]

[*] [] [*]

selfsubjectreviews.authentication.k8s.io [] [] [create]

selfsubjectaccessreviews.authorization.k8s.io [] [] [create]

selfsubjectrulesreviews.authorization.k8s.io [] [] [create]

globalnetworkpolicies.projectcalico.org [] [] [get list watch create update patch delete deletecollection]

networkpolicies.projectcalico.org [] [] [get list watch create update patch delete deletecollection]

[/api/*] [] [get]

[/api] [] [get]

[/apis/*] [] [get]

[/apis] [] [get]

[/healthz] [] [get]

[/healthz] [] [get]

[/livez] [] [get]

[/livez] [] [get]

[/openapi/*] [] [get]

[/openapi] [] [get]

[/readyz] [] [get]

[/readyz] [] [get]

matt@linux-server-1:~$ kubectl auth can-i delete nodes

Warning: resource 'nodes' is not namespace scoped

yes
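If you want to repeat this check for more than one verb, a small loop helps. This is a sketch under my own assumptions: the function name can_i_report and the list of verb/resource pairs are made up for illustration, and it relies on kubectl printing warnings to stderr.

```shell
# Print a small permissions table for the identity kubectl is currently using.
# can_i_report and the verb/resource list are hypothetical; edit to taste.
can_i_report() {
  local verb resource answer
  while read -r verb resource; do
    # can-i exits non-zero when the answer is "no", so ignore the exit code
    # and keep only the printed answer.
    answer="$(kubectl auth can-i "$verb" "$resource" </dev/null 2>/dev/null || true)"
    printf '%-8s %-24s %s\n' "$verb" "$resource" "${answer:-unknown}"
  done <<'EOF'
get pods
list deployments
delete nodes
create clusterrolebindings
EOF
}

# Usage: can_i_report
```

Running the same function under two different Teleport logins gives a quick side-by-side of what each identity can do.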

Not too bad so far.

Comparing Before and After Teleport

Before Teleport, your kubeconfig looked like this:

apiVersion: v1
clusters:
- cluster:
    server: https://192.168.64.4:6443
    certificate-authority-data: <base64 PEM>
  name: kubernetes
contexts:
- context:
    cluster: kubernetes
    user: kubernetes-admin
  name: kubernetes-admin@kubernetes
current-context: kubernetes-admin@kubernetes
users:
- name: kubernetes-admin
  user:
    client-certificate-data: <base64 PEM>
    client-key-data: <base64 PEM>

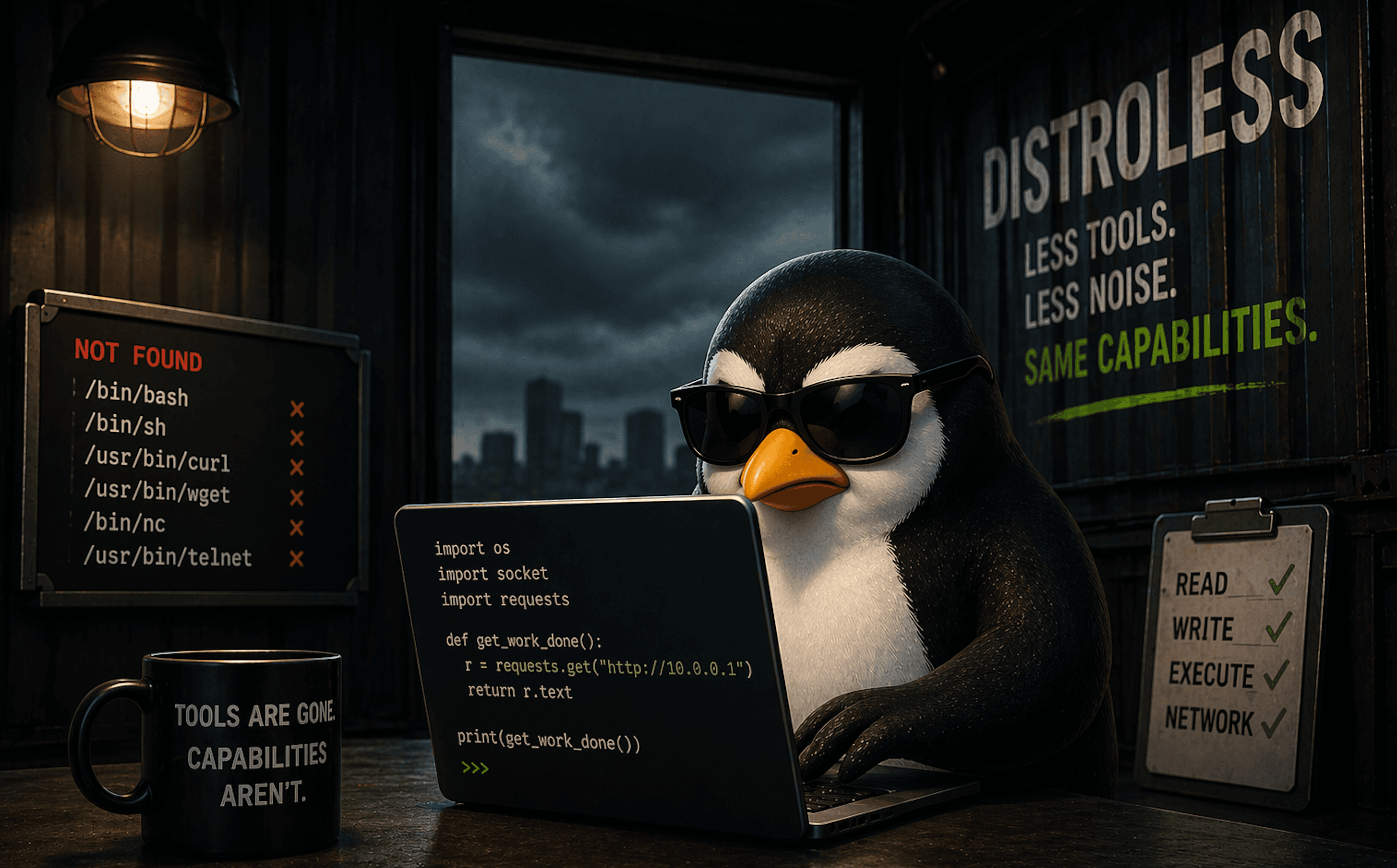

This default kubeadm config uses a static client cert with no central control and a long lifetime (one year by kubeadm's default). If it leaks, it's effectively admin access for as long as the cert is valid.

After Teleport, things are much better (no forever pass):

- Short-lived credentials via tsh

- Proxy-mediated access

- Role-based identity enforcement

- Automatic expiry & audit

| Aspect | Before Teleport | After Teleport |
| --- | --- | --- |
| Credential type | Static admin cert | Short-lived cert via tsh |
| Endpoint | Direct to API server | Through Teleport proxy |
| Identity | Hardcoded user | Role-based (teleport-admin) |
| Expiry | Manual rotation | Auto-expires (12h) |
| Audit | None | Centralized logs & sessions |

Create New Roles and Users

We’ll now create a minimal setup:

- Teleport role → maps to a Kubernetes group

- Teleport user → inherits that role

- ClusterRoleBinding → grants the group permissions

This follows from the documentation, but also goes a little deeper.

1) Teleport Role: junior-devs → maps to K8s view.

Save the following as junior-devs.yaml. This creates a Teleport role that gives our user Kubernetes access, taking its Kubernetes groups from the user's traits.

kind: role
version: v7
metadata:
  name: junior-devs
spec:
  allow:
    logins: ['{{internal.logins}}']
    kubernetes_groups: ['{{internal.kubernetes_groups}}']
    node_labels:
      '*': '*'
    kubernetes_labels:
      '*': '*'
    kubernetes_resources:
      - kind: '*'
        namespace: '*'
        name: '*'
        verbs: ['*']

Apply on the Auth host with tctl:

root@7867833a79e8:~# tctl create junior-devs.yaml

role "junior-devs" has been created

2) Teleport User: jimbo with role junior-devs.

Save the following as jimbo.yaml. It creates a Teleport user bound to the junior-devs Teleport role, with the teleport-view Kubernetes group set as a trait.

kind: user
version: v2
metadata:
  name: jimbo
spec:
  roles: ['junior-devs']
  traits:
    kubernetes_groups: ['teleport-view']

Apply on the Auth host with tctl:

root@7867833a79e8:~# tctl create -f jimbo.yaml

user "jimbo" has been created

To log in as jimbo, you'll need to repeat the user enrollment process. After creating the user, go to the UI and reset authentication on the jimbo account. This gives you a new signup link with OTP (damn OTP, I now have way too many Teleport tokens).

Now for the actual log in:

matt@linux-server-1:~/teleport$ tsh login --proxy=192.168.64.4:3080 --auth=local --user=jimbo --insecure

Enter password for Teleport user jimbo:

WARNING: You are using insecure connection to Teleport proxy https://192.168.64.4:3080

Enter an OTP code from a device:

> Profile URL: https://192.168.64.4:3080

Logged in as: jimbo

Cluster: teleport

Roles: junior-devs

Kubernetes: enabled

Valid until: 2025-10-20 22:27:09 -0700 PDT [valid for 12h0m0s]

Extensions: login-ip, permit-port-forwarding, permit-pty, private-key-policy

matt@linux-server-1:~/teleport$ tsh kube ls

Kube Cluster Name Labels Selected

----------------- ------ --------

kubernetes

matt@linux-server-1:~/teleport$ tsh kube login kubernetes --insecure

Logged into Kubernetes cluster "kubernetes". Try 'kubectl version' to test the connection.

3) Kubernetes ClusterRoleBinding for the view group

If you don't have the ClusterRoleBinding you are sort of in a bind.

matt@linux-server-1:~/teleport$ kubectl get po

Error from server (Forbidden): pods is forbidden: User "jimbo" cannot list resource "pods" in API group "" in the namespace "default"

Since Teleport injects kubernetes_groups: ["teleport-view"], you'll need to bind that group to the built‑in view role.

Create the ClusterRoleBinding in a separate terminal.

matt@controlplane:~$ kubectl create clusterrolebinding teleport-view --clusterrole=view --group=teleport-view

clusterrolebinding.rbac.authorization.k8s.io/teleport-view created
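If you prefer to keep the binding in version control rather than creating it imperatively, the same object can be rendered as a manifest. A sketch, with a helper name (render_group_binding) that I made up; the manifest fields are the standard rbac.authorization.k8s.io/v1 ones.

```shell
# Render a ClusterRoleBinding that grants a ClusterRole to a group.
# render_group_binding is a hypothetical helper name.
render_group_binding() {
  local name="$1" role="$2" group="$3"
  cat <<EOF
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: ${name}
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: ${role}
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: Group
  name: ${group}
EOF
}

# Usage (equivalent to the kubectl create command above):
#   render_group_binding teleport-view view teleport-view | kubectl apply -f -
```

The group name is the only link between the two systems: it has to match the kubernetes_groups trait on the Teleport user exactly.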

Then verify you can use the view role.

matt@linux-server-1:~/teleport$ kubectl get po

NAME READY STATUS RESTARTS AGE

flask-app-ccb7dbb5b-5x5qw 1/1 Running 5 (45h ago) 19d

nginx-676b6c5bbc-45cmn 1/1 Running 13 (45h ago) 137d

And that's that.

Teleport UI

Teleport Role: Go to Zero Trust Access -> Roles and choose Create New Role. Then supply the name and choose Kubernetes Access with a default Kubernetes resource.

Teleport User: Go to Zero Trust Access -> Users and choose Create New User. Then fill in the name, role, and trait (kubernetes_groups).

K8s Binding: Still done in Kubernetes as above.

Status Check

This completes a minimal identity → group → RBAC pipeline: Teleport defines who Jimbo is, Kubernetes RBAC decides what Jimbo can do. Easily done via code or UI.

Teleport Kubernetes Audit

Teleport logs every Kubernetes request. You can see this both in proxy logs and the UI. Let's take a look.

This is for a simple request.

matt@linux-server-1:~$ kubectl get deployment

NAME STATUS AGE

calico-apiserver Active 367d

calico-system Active 367d

default Active 367d

...

Proxy round‑trip (reverse proxy)

You can find this in the output of the running Teleport Docker instance when you submit a request. Below is a short breakdown of the proxy round-trip log line and the corresponding kube.request audit event (both the raw line and the UI JSON).

2025-10-20T05:03:04.563Z INFO [PROXY:PRO] Round trip completed pid:17.1 method:GET url:https://kube-teleport-proxy-alpn.teleport.cluster.local/apis/apps/v1/namespaces/default/deployments?limit=500 code:200 duration:18.776759ms tls.version:772 tls.resume:false tls.csuite:4865 tls.server:kube-teleport-proxy-alpn.teleport.cluster.local reverseproxy/reverse_proxy.go:255

Key fields (what they mean):

- method: GET (the HTTP verb kubectl used).

- url: .../apis/apps/v1/namespaces/default/deployments?limit=500 (the exact Kubernetes API path).

- code: 200 (the upstream API server response).

- tls.version / csuite / server: TLS details for the upstream hop inside Teleport (ALPN → Kube proxy).

This is the raw transport layer evidence: Teleport successfully proxied a K8s API call and got a 200 back.
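The numeric TLS fields in that log line are the standard protocol code points printed in decimal: 772 is 0x0304 (TLS 1.3) and 4865 is 0x1301 (TLS_AES_128_GCM_SHA256). A small sketch for pulling fields out of a line and decoding them; the function names are my own, and the key:value layout is assumed from the single log line shown above.

```shell
# Extract the value of a key:value token from a proxy log line.
# Assumes space-separated tokens, as in the line shown above.
log_field() {  # log_field <key> <line>
  printf '%s\n' "$2" | tr ' ' '\n' | sed -n "s/^$1://p" | head -n 1
}

# Map the decimal TLS version code point to a readable name.
tls_version_name() {
  case "$1" in
    769) echo "TLS 1.0" ;;   # 0x0301
    770) echo "TLS 1.1" ;;   # 0x0302
    771) echo "TLS 1.2" ;;   # 0x0303
    772) echo "TLS 1.3" ;;   # 0x0304
    *) printf 'unknown (0x%04x)\n' "$1" ;;
  esac
}

# Map the decimal cipher suite code point to its name (common TLS 1.3 suites only).
tls13_suite_name() {
  case "$1" in
    4865) echo "TLS_AES_128_GCM_SHA256" ;;        # 0x1301
    4866) echo "TLS_AES_256_GCM_SHA384" ;;        # 0x1302
    4867) echo "TLS_CHACHA20_POLY1305_SHA256" ;;  # 0x1303
    *) printf 'unknown (0x%04x)\n' "$1" ;;
  esac
}

# Usage, with the log line stored in $line:
#   tls_version_name "$(log_field tls.version "$line")"
#   tls13_suite_name "$(log_field tls.csuite "$line")"
```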

UI JSON (same event)

Access the Teleport UI and you can view nicely formatted audit logs.

And clicking into details you see the JSON.

Here is the actual request in all its glory.

{

"addr.remote": "192.168.64.8:33222",

"cluster_name": "teleport",

"code": "T3009I",

"ei": 0,

"event": "kube.request",

"kubernetes_cluster": "kubernetes",

"kubernetes_groups": [

"system:masters",

"system:authenticated"

],

"kubernetes_labels": {

"teleport.internal/resource-id": "11beab83-a0fa-48b5-8e1f-fd454a7f714c"

},

"kubernetes_users": [

"teleport-admin"

],

"login": "teleport-admin",

"namespace": "default",

"proto": "kube",

"request_path": "/apis/apps/v1/namespaces/default/deployments",

"resource_api_group": "apps/v1",

"resource_kind": "deployments",

"resource_namespace": "default",

"response_code": 200,

"server_hostname": "teleport",

"server_id": "785ed329-bb9b-4d16-8904-1b50d75377b5",

"server_labels": {

"teleport.internal/resource-id": "11beab83-a0fa-48b5-8e1f-fd454a7f714c"

},

"server_version": "18.2.4",

"sid": "",

"time": "2025-10-20T05:03:04.565Z",

"uid": "9544742b-2cfa-49d9-abab-edd7e7b06554",

"user": "teleport-admin",

"user_cluster_name": "teleport",

"user_kind": 1,

"user_roles": [

"access",

"editor"

],

"user_traits": {

"kubernetes_groups": [

"system:masters"

],

"kubernetes_users": [

"teleport-admin"

],

"logins": [

"root",

"ubuntu",

"ec2-user"

]

},

"verb": "GET"

}

A Logic of Sorts for the JSON:

Although you can surely decipher most of these, here is a rough map from a less technical point of view. Simple, clean, and fully auditable.

| Category | Fields |
| --- | --- |
| Who | user, login, user_roles, kubernetes_groups |
| What | verb, resource_kind, resource_api_group |
| Where | namespace, cluster_name, addr.remote |
| When | time, uid |
| Result | response_code |
| Server | server_id, server_hostname, server_version |
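To turn one of these events into a one-line "who did what, where" summary, a hacky sed is enough for a quick look. This sketch assumes the event was saved pretty-printed with one field per line, as in the UI JSON above; it only reads top-level string fields (not numbers or arrays), and the function names are made up. For anything serious, a real JSON parser is the better tool.

```shell
# Read a top-level string field from a pretty-printed audit event on stdin.
# Only matches lines of the form   "key": "value"   (one field per line).
audit_field() {  # audit_field <key> < event.json
  sed -n 's/^[[:space:]]*"'"$1"'":[[:space:]]*"\([^"]*\)".*$/\1/p' | head -n 1
}

# Summarise who did what, and where, for a single saved event.
audit_summary() {  # audit_summary <file>
  local f="$1"
  printf '%s %s %s in namespace %s\n' \
    "$(audit_field user < "$f")" \
    "$(audit_field verb < "$f")" \
    "$(audit_field resource_kind < "$f")" \
    "$(audit_field resource_namespace < "$f")"
}

# Usage: audit_summary event.json   # event.json is a hypothetical saved copy of the UI JSON
```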

Wrap-Up: Access Control, Actually Done

That’s a wrap — for now — on Teleport and Kubernetes access.

What started as a quick experiment to make kubeconfig a little safer turned into an interesting, albeit time-consuming, exercise. The more I use Teleport, the more I see how it's replacing an entire trust model.

- Short-lived certs instead of forever-tokens

- Centralized user and role management

- Audit trails that are at your fingertips

Teleport is not just for Kubernetes, but it is clearly useful for leveling up Kubernetes RBAC. Teleport isn’t trying to reinvent Kubernetes security; it’s trying to make identity-aware access sane.

I’ll probably revisit this when I start layering in SSO and stuff, but for now? It’s a clean, comprehensible access model that’s hard not to like.